In today’s rapidly evolving financial landscape, the intersection of generative AI and financial risk management represents one of the most exciting frontiers in fintech. As someone who’s spent over two decades navigating the complexities of enterprise technology, I’ve watched cloud computing transform industries—but the revolution happening in financial services right now feels different. It’s not just about efficiency; it’s about reimagining how we understand, model, and respond to financial risk.

The Financial Risk Landscape: Why Traditional Approaches Fall Short

Traditional risk models in finance have relied heavily on statistical methods that assume normal distributions, linear relationships, and historical patterns that repeat. If you’ve worked in finance, you know the limitations:

- Historical data fails to capture “black swan” events

- Risk factors are increasingly interconnected in non-linear ways

- Market behavior can change faster than models can adapt

- Alternative data sources remain largely untapped

These limitations create an opening for generative AI, which can model complex distributions, capture non-linear relationships, and incorporate diverse data types.

How Generative AI is Transforming Risk Modeling

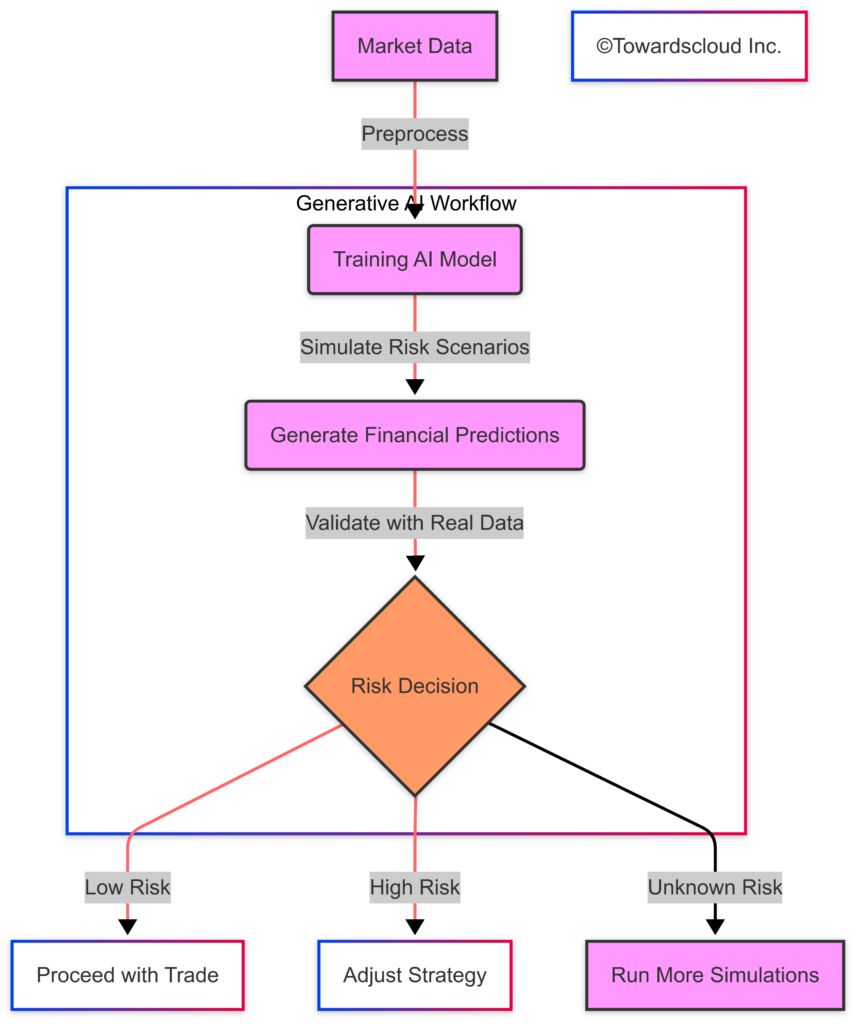

Unlike traditional machine learning, which focuses on prediction from historical patterns, generative AI can create new, previously unseen scenarios. This capability matters for risk management, where understanding the “unknown unknowns” is critical.

1. Monte Carlo Simulations on Steroids

Monte Carlo simulations have been the backbone of risk analysis for decades, but generative models take this approach to an entirely new level. Instead of generating random samples based on simplistic distributions, generative AI can:

- Create realistic market scenarios that preserve complex correlations between assets

- Model non-linear relationships without explicit programming

- Generate extreme but plausible stress test scenarios

- Continuously learn and improve from new market data
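
To make the baseline concrete, here is a minimal sketch of the classic Monte Carlo approach that generative models aim to improve on. It assumes two assets with normally distributed daily returns; the volatilities, correlation, and weights are illustrative placeholders, not values from real data, and the function names are hypothetical.

```python
import math
import random

def simulate_portfolio_returns(n_scenarios, vols, corr, weights, seed=0):
    """Draw correlated normal returns for two assets and return portfolio returns."""
    rng = random.Random(seed)
    vol_a, vol_b = vols
    w_a, w_b = weights
    results = []
    for _ in range(n_scenarios):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        # A 2x2 Cholesky factor turns independent draws into correlated ones
        r_a = vol_a * z1
        r_b = vol_b * (corr * z1 + math.sqrt(1.0 - corr ** 2) * z2)
        results.append(w_a * r_a + w_b * r_b)
    return results

def value_at_risk(returns, confidence=0.99):
    """Simulation-based VaR: the loss exceeded in roughly (1 - confidence) of scenarios."""
    losses = sorted(-r for r in returns)
    index = min(int(confidence * len(losses)), len(losses) - 1)
    return losses[index]

# Illustrative inputs only: 1% and 2% daily volatility, 0.6 correlation, equal weights
scenarios = simulate_portfolio_returns(
    n_scenarios=20_000, vols=(0.01, 0.02), corr=0.6, weights=(0.5, 0.5)
)
var_99 = value_at_risk(scenarios, confidence=0.99)
print(f"1-day 99% VaR: {var_99:.4f}")
```

A generative model would replace the simple normal sampler with learned scenarios, while the VaR calculation on top of the scenarios stays the same.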

2. Synthetic Data Generation for Model Testing

One of the most powerful applications is creating synthetic financial datasets that mimic real-world market behavior:

- Testing trading strategies against generated market conditions

- Augmenting limited historical data for rare events

- Creating privacy-preserving datasets for regulatory compliance

- Simulating market reactions to unprecedented events
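
Before trusting synthetic data for any of these uses, it helps to check that it resembles the real thing. The sketch below compares a few summary statistics of real and synthetic return samples. The random samples are stand-ins for actual data, and the choice of statistics is an assumption rather than a standard.

```python
import random
import statistics

def summary_stats(returns):
    """Mean, volatility, excess kurtosis, and 1% quantile of a return sample."""
    mean = statistics.fmean(returns)
    std = statistics.pstdev(returns)
    fourth_moment = statistics.fmean([(r - mean) ** 4 for r in returns])
    ordered = sorted(returns)
    return {
        "mean": mean,
        "vol": std,
        "excess_kurtosis": fourth_moment / std ** 4 - 3.0,
        "q01": ordered[int(0.01 * len(ordered))],
    }

def compare_samples(real, synthetic):
    """Absolute gap between real and synthetic values for each statistic."""
    real_stats = summary_stats(real)
    synth_stats = summary_stats(synthetic)
    return {name: abs(real_stats[name] - synth_stats[name]) for name in real_stats}

rng = random.Random(42)
real = [rng.gauss(0.0, 0.01) for _ in range(5000)]        # stand-in for historical returns
good_synth = [rng.gauss(0.0, 0.01) for _ in range(5000)]  # generator matching the real distribution
bad_synth = [rng.gauss(0.0, 0.02) for _ in range(5000)]   # generator that overstates volatility

good_gaps = compare_samples(real, good_synth)
bad_gaps = compare_samples(real, bad_synth)
print("good generator gaps:", good_gaps)
print("bad generator gaps: ", bad_gaps)
```

Large gaps in volatility or tail quantiles are a sign that the generator has not captured the behavior that matters most for risk.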

3. Natural Language Understanding for Market Sentiment

Generative AI excels at extracting insights from text data, opening up new dimensions for risk modeling:

- Processing earnings calls, news, and social media in real-time

- Quantifying market sentiment and its impact on volatility

- Identifying emerging risks before they appear in quantitative data

- Creating narrative risk scenarios that combine quantitative and qualitative factors

Building a Risk Modeling System with Generative AI

Let’s put theory into action with a risk modeling example using Generative Adversarial Networks (GANs) in Python.

We’ll use real-world financial data to train a model that generates simulated market values, which can then feed downstream risk estimates.

Step 1: Install Required Libraries

```python
!pip install numpy pandas tensorflow matplotlib scikit-learn
```

Step 2: Import Libraries

```python
import numpy as np
import pandas as pd
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import matplotlib.pyplot as plt
from sklearn.preprocessing import MinMaxScaler
```

Step 3: Load Financial Data

We will use historical stock market data (e.g., S&P 500 prices) for training.

```python
# Load financial dataset (example: S&P 500)
df = pd.read_csv("sp500.csv")  # Ensure you have a dataset with "Date" and "Close" columns
df["Date"] = pd.to_datetime(df["Date"])
df.set_index("Date", inplace=True)

# Normalize to [-1, 1] so the data matches the generator's tanh output range
scaler = MinMaxScaler(feature_range=(-1, 1))
df_scaled = scaler.fit_transform(df[["Close"]])
```

Step 4: Define the GAN Architecture

A GAN consists of:

- Generator – Creates synthetic financial data.

- Discriminator – Evaluates whether data is real or fake.
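
To see what the two networks are competing over, here is a minimal pure-Python sketch of the binary cross-entropy objective, the same loss named in the training code. The function names are hypothetical helpers for illustration; in practice the framework's built-in loss does this work.

```python
import math

def binary_cross_entropy(predictions, labels, eps=1e-7):
    """Average binary cross-entropy between predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, y in zip(predictions, labels):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(predictions)

def discriminator_loss(scores_real, scores_fake):
    """The discriminator wants real samples scored 1 and generated samples scored 0."""
    real_loss = binary_cross_entropy(scores_real, [1] * len(scores_real))
    fake_loss = binary_cross_entropy(scores_fake, [0] * len(scores_fake))
    return real_loss + fake_loss

def generator_loss(scores_fake):
    """The generator wants its samples scored 1, i.e. mistaken for real data."""
    return binary_cross_entropy(scores_fake, [1] * len(scores_fake))

# A discriminator that cannot tell the difference outputs 0.5 everywhere
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))  # about 1.386 (2 * ln 2)
print(generator_loss([0.9, 0.8]))  # low: the generator is fooling the discriminator
print(generator_loss([0.1, 0.2]))  # high: the discriminator catches the fakes
```

Training alternates between lowering the discriminator loss and lowering the generator loss, which is why the two models are updated in separate steps.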

```python
# Generator model: maps a 100-dimensional noise vector to one synthetic value
def build_generator():
    model = keras.Sequential([
        keras.Input(shape=(100,)),
        layers.Dense(64, activation="relu"),
        layers.Dense(128, activation="relu"),
        layers.Dense(256, activation="relu"),
        layers.Dense(1, activation="tanh"),
    ])
    return model

# Discriminator model: scores a single value as real (close to 1) or generated (close to 0)
def build_discriminator():
    model = keras.Sequential([
        keras.Input(shape=(1,)),
        layers.Dense(256, activation="relu"),
        layers.Dense(128, activation="relu"),
        layers.Dense(64, activation="relu"),
        layers.Dense(1, activation="sigmoid"),
    ])
    return model
```

Step 5: Train the GAN

```python
# Initialize models
generator = build_generator()
discriminator = build_discriminator()

# Compile the discriminator on its own so it can be trained directly
discriminator.compile(loss="binary_crossentropy", optimizer="adam", metrics=["accuracy"])

# Combined model: freeze the discriminator so only the generator is updated through `gan`.
# Note: this compile-then-freeze pattern follows classic Keras 2 behavior. Newer Keras
# versions may treat the `trainable` flag differently, so verify that both models still learn.
discriminator.trainable = False
gan_input = keras.Input(shape=(100,))
fake_data = generator(gan_input)
gan_output = discriminator(fake_data)
gan = keras.Model(gan_input, gan_output)
gan.compile(loss="binary_crossentropy", optimizer="adam")

# Training loop
epochs = 1000
batch_size = 32

for epoch in range(epochs):
    # Train the discriminator on one real batch and one generated batch
    noise = np.random.normal(0, 1, (batch_size, 100))
    generated_data = generator.predict(noise, verbose=0)
    real_data = df_scaled[np.random.randint(0, df_scaled.shape[0], batch_size)]
    labels_real = np.ones((batch_size, 1))
    labels_fake = np.zeros((batch_size, 1))
    d_loss_real = discriminator.train_on_batch(real_data, labels_real)
    d_loss_fake = discriminator.train_on_batch(generated_data, labels_fake)

    # Train the generator to make the discriminator label its output as real
    noise = np.random.normal(0, 1, (batch_size, 100))
    g_loss = gan.train_on_batch(noise, np.ones((batch_size, 1)))

    if epoch % 100 == 0:
        # train_on_batch returns [loss, accuracy] for the discriminator
        d_loss = d_loss_real[0] + d_loss_fake[0]
        print(f"Epoch {epoch}: Generator Loss: {g_loss}, Discriminator Loss: {d_loss}")
```

Cloud Implementation Comparison: AWS vs. Azure vs. GCP

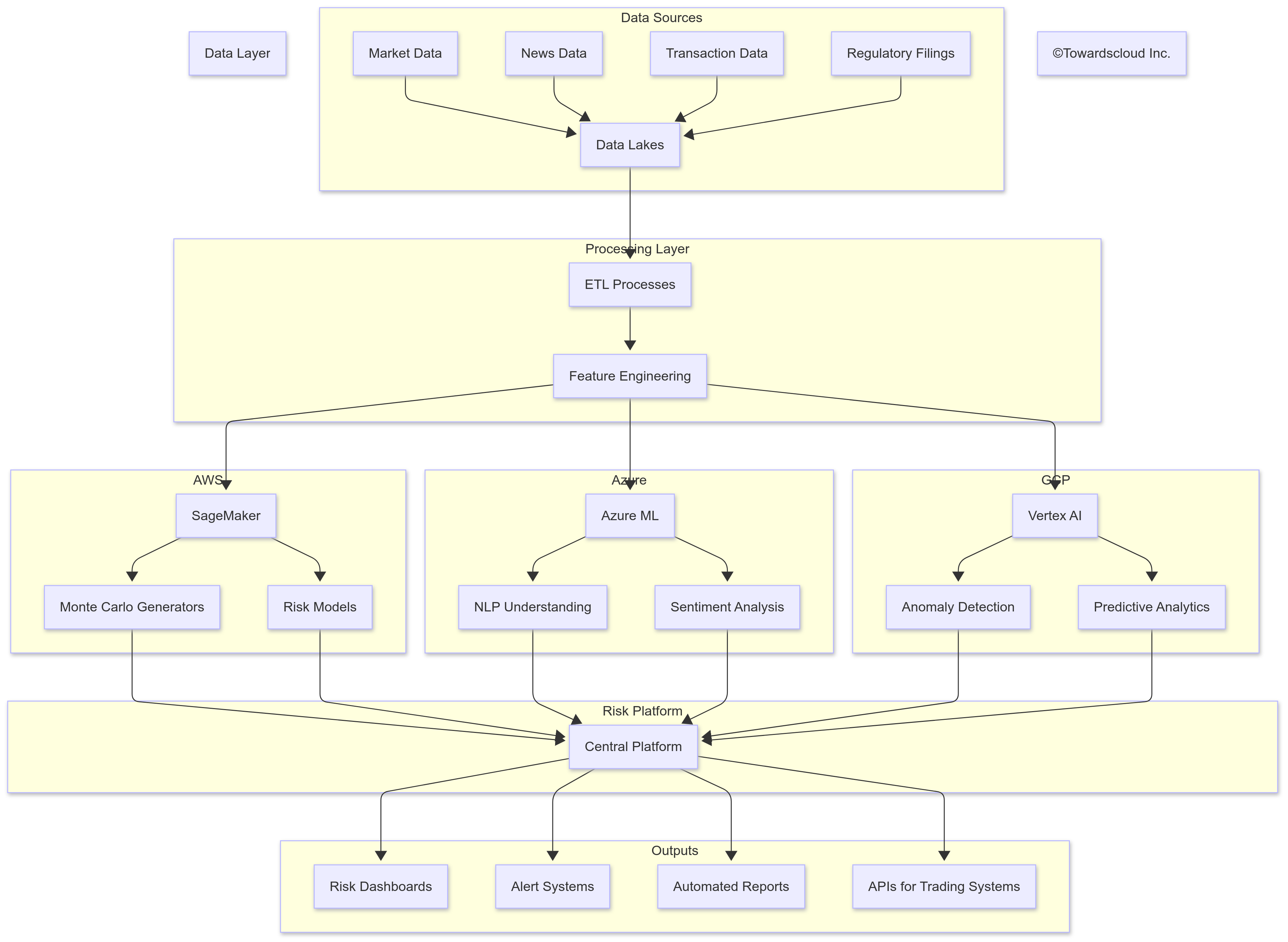

Let’s look at how the major cloud providers support generative AI for financial risk modeling. Each has its own strengths:

AWS Financial Services AI Stack

AWS offers a comprehensive suite for financial risk modeling:

- Amazon SageMaker: Provides the infrastructure for training and deploying generative models

- AWS FinSpace: Purpose-built for financial analytics with built-in compliance controls

- Amazon Comprehend: Analyzes financial text data for sentiment and key information extraction

- AWS Batch: Handles massive parallel Monte Carlo simulations efficiently

AWS’s approach emphasizes security, compliance, and the ability to handle enormous computational workloads—making it particularly popular with large financial institutions with strict regulatory requirements.

Azure Financial Services Solutions

Microsoft’s offerings focus on integration and accessibility:

- Azure Machine Learning: Supports generative model training with robust governance

- Azure Synapse Analytics: Provides unified analytics for financial data processing

- Azure Cognitive Services: Offers pre-built AI capabilities for document and language understanding

- Azure OpenAI Service: Provides access to powerful generative models with financial services controls

Azure’s strength lies in enterprise integration and the familiar development environment for financial services teams already using Microsoft tools.

Google Cloud Financial Services

Google Cloud brings its AI research expertise to financial services:

- Vertex AI: Provides an end-to-end platform for building generative models

- BigQuery ML: Allows running ML models directly against financial data in the data warehouse

- Document AI: Processes financial documents with high accuracy

- Dataplex: Manages diverse financial data types in a unified platform

Google’s offerings benefit from the company’s deep research expertise in generative AI and tend to excel at handling unstructured data and providing innovative approaches to financial modeling.

Practical Implementation: Risk Modeling Code Examples

Let’s explore some practical code examples for implementing generative AI-based risk models across different cloud platforms.

Example 1: Synthetic Market Data Generation with AWS SageMaker

This example uses a Generative Adversarial Network (GAN) to create synthetic financial time series data:

This model can generate thousands of realistic market scenarios, preserving the correlation structure between different assets while creating plausible stress test conditions.
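
One way to check the correlation-preservation claim is to compare correlation matrices for real and generated returns. The sketch below is cloud-agnostic and runs locally; it does not use any SageMaker API. The asset names, the sample data, and the helper functions are hypothetical placeholders.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation between two equal-length series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def correlation_matrix(series):
    """series: dict of asset name -> list of returns (all the same length)."""
    names = list(series)
    return {(a, b): pearson(series[a], series[b]) for a in names for b in names}

def max_correlation_gap(real, synthetic):
    """Largest absolute difference between matching correlation entries."""
    real_corr = correlation_matrix(real)
    synth_corr = correlation_matrix(synthetic)
    return max(abs(real_corr[key] - synth_corr[key]) for key in real_corr)

def correlated_pair(corr, n, seed):
    """Placeholder data: two return series with a chosen correlation."""
    rng = random.Random(seed)
    a, b = [], []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        a.append(0.01 * z1)
        b.append(0.01 * (corr * z1 + math.sqrt(1 - corr ** 2) * z2))
    return {"asset_a": a, "asset_b": b}

real = correlated_pair(corr=0.7, n=5000, seed=1)
good_synth = correlated_pair(corr=0.7, n=5000, seed=2)  # preserves the dependence
bad_synth = correlated_pair(corr=0.0, n=5000, seed=3)   # loses the dependence

gap_good = max_correlation_gap(real, good_synth)
gap_bad = max_correlation_gap(real, bad_synth)
print(f"gap (good generator): {gap_good:.3f}")
print(f"gap (bad generator):  {gap_bad:.3f}")
```

A generator that scores well on each asset individually can still fail this check, which is why the dependence structure deserves its own test.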

Example 2: NLP-Enhanced Risk Assessment with Azure

This example shows how to use Azure’s services to incorporate news sentiment into your risk models:

This approach allows your risk models to dynamically adjust based on market sentiment, capturing risks that might not yet be reflected in price movements.
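
As a rough illustration of the adjustment step, the sketch below scales a baseline volatility by average news sentiment. It assumes sentiment scores in [-1, 1] have already been produced by an upstream text-analytics service; no Azure API is called here, and the linear scaling rule and its `sensitivity` parameter are illustrative assumptions, not a calibrated model.

```python
def sentiment_adjusted_vol(base_vol, sentiment_scores, sensitivity=0.5):
    """Scale a baseline volatility up when average sentiment is negative.

    sentiment_scores: values in [-1, 1] from an upstream text-analytics step.
    The linear rule below is an assumption made for illustration.
    """
    if not sentiment_scores:
        return base_vol  # no news, no adjustment
    avg = sum(sentiment_scores) / len(sentiment_scores)
    # Negative sentiment raises the multiplier, positive sentiment lowers it.
    # The floor keeps the adjusted volatility at or above half the baseline.
    multiplier = max(0.5, 1.0 - sensitivity * avg)
    return base_vol * multiplier

base_vol = 0.02  # placeholder daily volatility
print(sentiment_adjusted_vol(base_vol, [-0.8, -0.4, -0.6]))  # negative news: higher vol
print(sentiment_adjusted_vol(base_vol, [0.5, 0.7]))          # positive news: lower vol
print(sentiment_adjusted_vol(base_vol, []))                  # no news: unchanged
```

In a real system the sensitivity would need to be estimated from data, for example by regressing realized volatility on lagged sentiment.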

Example 3: Anomaly Detection in Trading Patterns with GCP

This example uses Google Cloud’s Vertex AI to detect unusual trading patterns that could represent risk:

This system continuously monitors trading patterns, alerting risk managers to unusual activity that might indicate market manipulation, technical issues, or emerging market stress.
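
The underlying idea can be sketched without any cloud service. The example below flags values that sit far outside their trailing window, using a simple rolling z-score as a stand-in for a trained anomaly model; it does not call Vertex AI. The window size, the threshold, and the volume series are all hypothetical.

```python
import statistics

def flag_anomalies(values, window=20, threshold=4.0):
    """Return indices whose value deviates from the trailing window by more than `threshold` sigmas."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mean = statistics.fmean(history)
        std = statistics.pstdev(history)
        if std == 0:
            continue  # flat history: z-score is undefined
        z_score = (values[i] - mean) / std
        if abs(z_score) > threshold:
            flagged.append(i)
    return flagged

# Placeholder trade volumes with a regular pattern and one injected spike
volumes = [1000 + (i % 5) * 10 for i in range(60)]
volumes[45] = 5000

print(flag_anomalies(volumes))  # [45]
```

A production system would use richer features than a single series, but the alerting logic keeps the same shape: score each observation against recent history and escalate the outliers.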

Multi-Cloud Financial Risk Architecture

Beyond Risk Modeling: Other Financial Applications

While risk modeling is a perfect fit for generative AI, the technology extends to many other areas of finance:

1. Personalized Financial Advice

Generative AI can provide tailored financial guidance by:

- Understanding client goals and risk tolerance from natural language conversations

- Generating personalized investment strategies based on market conditions and client circumstances

- Creating easy-to-understand explanations of complex financial concepts

- Adapting recommendations as markets change or client situations evolve

2. Algorithmic Trading Enhancement

Trading algorithms benefit from generative AI in multiple ways:

- Predicting market reactions to specific events or news

- Generating trading signals based on multi-modal data

- Creating synthetic market scenarios for strategy backtesting

- Adapting to changing market regimes without manual intervention

3. Regulatory Compliance and Reporting

Compliance teams are finding generative AI invaluable for:

- Automating the creation of regulatory reports with natural language

- Analyzing policy documents to identify compliance requirements

- Generating explanations for model decisions to satisfy regulators

- Creating synthetic but realistic test data for compliance validation

Implementation Challenges and Best Practices

Despite the potential, implementing generative AI for financial risk management comes with significant challenges:

1. Explainability Requirements

Financial regulators increasingly require that risk models be explainable. To address this:

- Implement parallel interpretable models alongside generative models

- Develop visualization tools that help explain model outputs

- Maintain comprehensive documentation of model design and training data

- Create narrative explanations of risk scenarios generated by the models
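
As one example of a parallel interpretable model, the sketch below computes a parametric (normal) VaR as a benchmark and flags generative-model results that stray too far from it. The tolerance, the sample history, and the function names are assumptions chosen for illustration.

```python
import random
import statistics

def parametric_var(returns, confidence=0.99):
    """Normal-distribution VaR: easy to explain, but blind to fat tails."""
    mean = statistics.fmean(returns)
    std = statistics.stdev(returns)
    z = statistics.NormalDist().inv_cdf(confidence)
    return z * std - mean

def benchmark_check(scenario_var, returns, confidence=0.99, tolerance=0.5):
    """Compare a scenario-based VaR against the interpretable benchmark."""
    benchmark = parametric_var(returns, confidence)
    relative_gap = abs(scenario_var - benchmark) / benchmark
    return {
        "benchmark_var": benchmark,
        "relative_gap": relative_gap,
        "needs_review": relative_gap > tolerance,
    }

rng = random.Random(7)
history = [rng.gauss(0.0, 0.01) for _ in range(2000)]  # placeholder return history

ok = benchmark_check(scenario_var=0.025, returns=history)
flagged = benchmark_check(scenario_var=0.060, returns=history)
print(ok)
print(flagged)
```

A large gap does not mean the generative model is wrong, since capturing tail risk the benchmark misses is the point. It does mean someone should be able to explain the difference to a regulator.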

2. Data Quality and Bias Concerns

Financial models are only as good as their data:

- Audit training data for biases that could affect risk assessments

- Implement continuous monitoring
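
One common way to monitor incoming data is the Population Stability Index (PSI), which measures how far a new sample has drifted from a baseline. The sketch below is a minimal version with equal-width bins; the bin count and the sample data are assumptions, and it expects a baseline with at least two distinct values.

```python
import math
import random

def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI between a baseline sample and a new sample, using bins from the baseline range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins

    def bin_shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values to the edge bins
            counts[idx] += 1
        # eps keeps empty bins from producing log(0)
        return [max(c / len(values), eps) for c in counts]

    exp_shares = bin_shares(expected)
    act_shares = bin_shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(exp_shares, act_shares))

rng = random.Random(11)
baseline = [rng.gauss(0.0, 0.01) for _ in range(5000)]     # training-period returns
same_regime = [rng.gauss(0.0, 0.01) for _ in range(5000)]  # new data, same behavior
new_regime = [rng.gauss(0.01, 0.02) for _ in range(5000)]  # new data, shifted and more volatile

psi_same = population_stability_index(baseline, same_regime)
psi_shift = population_stability_index(baseline, new_regime)
print(f"PSI (same regime): {psi_same:.4f}")
print(f"PSI (new regime):  {psi_shift:.4f}")
```

Practitioners often treat a PSI above roughly 0.25 as a sign of significant drift, though the right threshold depends on the use case. When the index rises, it is a cue to re-audit the data and consider retraining.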

Conclusion: Embracing the Generative Revolution in Finance

Generative AI is not just another tool in the financial technology arsenal – it represents a fundamental shift in how we approach financial data, risk, and decision-making. By moving beyond rule-based systems to models that can understand context, learn complex relationships, and generate novel outputs, we’re entering an era of more adaptive, nuanced financial analysis.

Each cloud provider brings different strengths to financial generative AI: AWS with its specialized FinSpace service and comprehensive ML stack, Azure with its enterprise integration and governance tools, and Google Cloud with its powerful data processing capabilities and cutting-edge AI research. Financial institutions can leverage these platforms based on their specific needs and existing technology ecosystems.

The journey toward generative AI-powered finance comes with significant challenges – data privacy, regulatory compliance, model explainability, and ethical considerations chief among them. However, the potential benefits in risk management, operational efficiency, and customer experience make this a transformation worth pursuing.

As we move forward, the most successful implementations will be those that thoughtfully integrate these new capabilities into existing workflows while maintaining human oversight at critical decision points. The future of finance isn’t about replacing human judgment with AI, but about creating powerful partnerships that combine the best of both.

For financial institutions looking to begin this journey, my advice is simple: start with well-defined problems, invest in data infrastructure, build cross-functional teams, and embrace a culture of continuous learning and adaptation. The generative AI revolution in finance is just beginning, and those who engage thoughtfully now will be best positioned to thrive in this new landscape.

This article is part of our ongoing series exploring the intersection of cloud technologies and genAI innovation. For more insights on implementing cloud solutions, visit TowardsCloud.com.