Generative AI is changing how businesses operate, and each major cloud provider has taken a different route to offering it. For IT professionals choosing a platform, understanding those differences is essential to making an informed decision. This article compares how AWS, Azure, and Google Cloud Platform (GCP) approach generative AI, covering their strengths, limitations, and distinctive offerings.

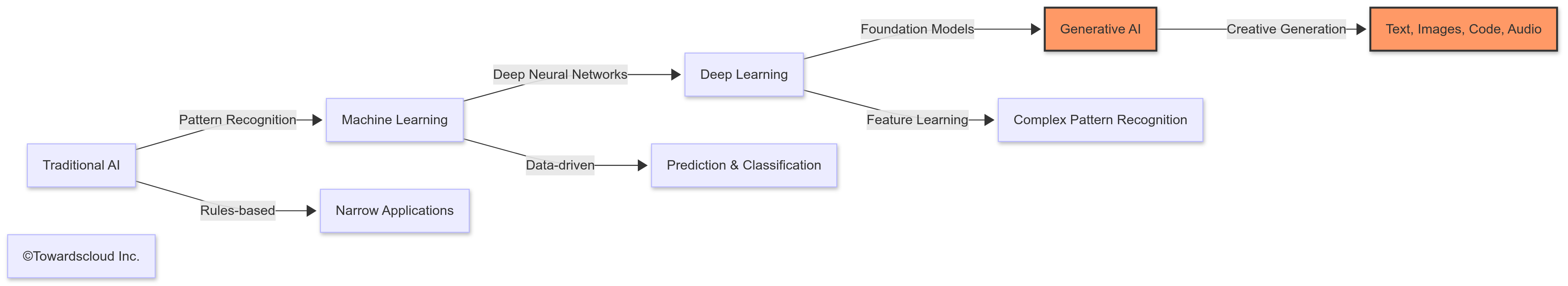

The Generative AI Revolution

Generative AI represents a paradigm shift in how we interact with technology. Unlike traditional systems that follow explicit programming, generative AI creates new content, from text and images to code and music. This technology has found applications across industries—from content creation and customer service to drug discovery and software development.

Let’s examine how each cloud provider has built their generative AI platforms, looking at their foundation models, development tools, and specialized services.

Foundation Models: The Building Blocks

Each cloud provider offers access to foundation models—large language models (LLMs) and multimodal models trained on vast datasets that can be customized for specific tasks through fine-tuning or prompting.

| Provider | Foundation Models | Key Features | Pricing Model |
|---|---|---|---|
| AWS | Amazon Bedrock (Claude, Mistral, Llama, Titan, etc.) | Multi-model marketplace approach, simplified API access, pay-per-use pricing | Pay-per-token, volume discounts |
| Azure | Azure OpenAI Service (GPT-4, DALL-E 3, Whisper), Azure AI Studio | Deep OpenAI integration, enterprise security features, compliance tools | Consumption-based pricing with tiered rates |
| GCP | Vertex AI (Gemini Pro, Gemini Ultra, PaLM 2, Imagen) | Google’s proprietary models, multimodal capabilities, research alignment | Token-based pricing with discounts for committed use |

AWS Bedrock: The Marketplace Approach

AWS takes a marketplace approach with Amazon Bedrock, offering a unified API that provides access to models from multiple providers, including Anthropic’s Claude, Meta’s Llama, Mistral, and Amazon’s own Titan models. This variety gives developers flexibility to choose the best model for their specific use case, whether that’s content generation, summarization, or conversational AI.
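To make the "unified API" idea concrete, here is a minimal sketch built around the Bedrock Converse API in boto3. It is an illustration under assumptions, not a reference implementation: the model IDs and region are examples whose availability varies by account, and `build_converse_request` and `ask` are helper names introduced here. The point it shows is that switching providers changes only the `modelId` string, not the request shape.

```python
from typing import Any

# Example model IDs (assumption: confirm which ones are enabled in your account and region).
MODEL_IDS = {
    "claude": "anthropic.claude-3-sonnet-20240229-v1:0",
    "llama": "meta.llama3-8b-instruct-v1:0",
    "mistral": "mistral.mistral-large-2402-v1:0",
}


def build_converse_request(model_key: str, prompt: str, max_tokens: int = 512) -> dict[str, Any]:
    """Build the keyword arguments for bedrock-runtime's converse() call."""
    return {
        "modelId": MODEL_IDS[model_key],
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.2},
    }


def ask(model_key: str, prompt: str, region: str = "us-east-1") -> str:
    """Send the prompt to Bedrock. Requires boto3 and valid AWS credentials."""
    import boto3  # imported lazily so the request builder works without the SDK installed

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(model_key, prompt))
    # The Converse API returns the reply as a list of content blocks.
    return response["output"]["message"]["content"][0]["text"]
```

Calling `ask("claude", ...)` and `ask("mistral", ...)` with the same prompt is one simple way to compare models side by side before committing to one.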

A real-world example is how Cvent, an event management platform, used Amazon Bedrock to enhance its event recommendation engine. By using Claude models through Bedrock, Cvent was able to generate personalized event suggestions based on attendee profiles and past behavior, resulting in a 32% increase in session attendance.

Azure OpenAI Service: The Microsoft-OpenAI Alliance

Microsoft’s deep partnership with OpenAI gives Azure a unique advantage in the generative AI space. Through Azure OpenAI Service, developers gain access to OpenAI models such as GPT-4 and DALL-E 3, backed by Azure’s security, compliance, and scalability features.
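As a rough sketch of what calling the service looks like, the snippet below uses the `AzureOpenAI` client from the `openai` Python package (version 1 or later). The environment variable `AZURE_OPENAI_DEPLOYMENT`, the API version string, and the helper names are assumptions made for illustration; your resource will have its own endpoint, key, and deployment name.

```python
import os


def brand_voice_messages(brief: str, voice_guidelines: str) -> list[dict[str, str]]:
    """Build a chat message list: guidelines go in the system role, the brief in the user role."""
    return [
        {
            "role": "system",
            "content": f"Write marketing copy that follows these guidelines:\n{voice_guidelines}",
        },
        {"role": "user", "content": brief},
    ]


def generate_copy(brief: str, voice_guidelines: str) -> str:
    """Call an Azure OpenAI chat deployment. Requires the openai package and a provisioned resource."""
    from openai import AzureOpenAI  # imported lazily so the message builder runs without the SDK

    client = AzureOpenAI(
        azure_endpoint=os.environ["AZURE_OPENAI_ENDPOINT"],
        api_key=os.environ["AZURE_OPENAI_API_KEY"],
        api_version="2024-02-01",  # assumption: use whichever API version your resource supports
    )
    response = client.chat.completions.create(
        # On Azure, "model" is the name of your deployment, not the model family.
        model=os.environ["AZURE_OPENAI_DEPLOYMENT"],
        messages=brand_voice_messages(brief, voice_guidelines),
    )
    return response.choices[0].message.content
```

Keeping the voice guidelines in the system message means the same guidelines can be reused across many briefs without rewriting each prompt.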

Consider how Coca-Cola leveraged Azure OpenAI Service to reinvent its marketing approach. Using GPT-4, they created an AI system that could generate marketing copy in the distinctive “Coca-Cola voice” while maintaining brand consistency across 200+ markets, reducing content creation time by 70%.

Google Cloud Vertex AI: The Research Powerhouse

Google Cloud’s Vertex AI platform provides access to Google’s proprietary models, including the Gemini family (Pro and Ultra), PaLM 2, and Imagen. These models reflect Google’s research heritage and offer strong performance in multimodal tasks.
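A multimodal request on Vertex AI might look like the sketch below, which sends an image and a text prompt in a single call through the Vertex AI Python SDK. The model name `gemini-1.5-pro`, the default location, and the helper names are assumptions chosen for illustration; check Model Garden for the models available to your project.

```python
def description_prompt(title: str, attributes: dict[str, str]) -> str:
    """Build the text half of a multimodal prompt from basic product metadata."""
    lines = [f"- {key}: {value}" for key, value in sorted(attributes.items())]
    return (
        f"Write a two-sentence product description for '{title}' "
        "using the attached image and these attributes:\n" + "\n".join(lines)
    )


def describe_product(
    image_uri: str,
    title: str,
    attributes: dict[str, str],
    project: str,
    location: str = "us-central1",
    model_name: str = "gemini-1.5-pro",  # assumption: substitute a model enabled in your project
) -> str:
    """Send an image plus text to a Gemini model. Requires google-cloud-aiplatform and credentials."""
    import vertexai  # imported lazily so the prompt builder runs without the SDK
    from vertexai.generative_models import GenerativeModel, Part

    vertexai.init(project=project, location=location)
    model = GenerativeModel(model_name)
    image = Part.from_uri(image_uri, mime_type="image/jpeg")  # e.g. a gs:// URI
    response = model.generate_content([image, description_prompt(title, attributes)])
    return response.text
```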

An illuminating example is how Wayfair uses Vertex AI with Gemini models to transform their product catalog management. By implementing a system that automatically generates rich product descriptions from images and basic metadata, they’ve increased their catalog processing efficiency by 4x while improving search relevance.

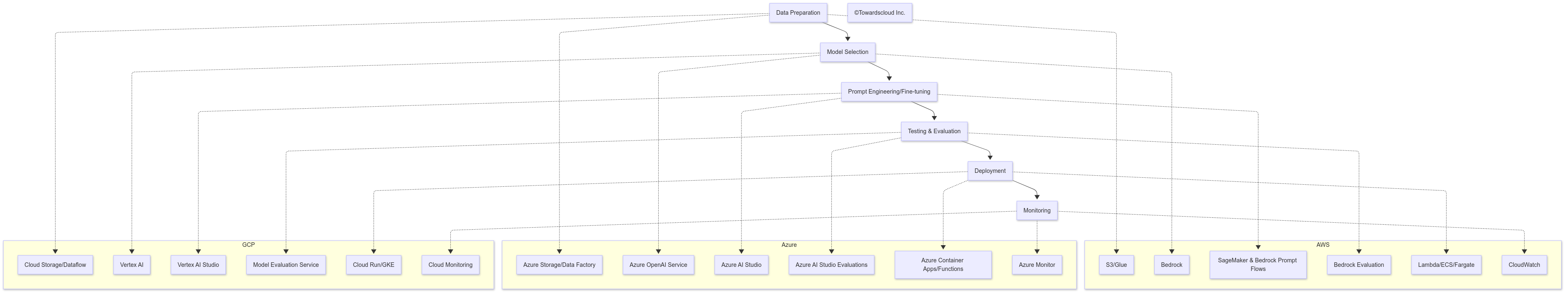

Development Environments: Building GenAI Applications

Cloud providers have recognized that generative AI requires specialized development environments and have created tools to streamline the building, testing, and deployment of AI applications.

AWS AI Development Tools

AWS offers a comprehensive set of tools for AI development:

- SageMaker Studio: Integrated development environment for machine learning

- Bedrock Prompt Flows: Visual interface for creating complex prompt chains

- CodeWhisperer: AI coding assistant for developers (since folded into Amazon Q Developer)

- Amazon Q: Enterprise conversational assistant for developers

For instance, Peloton uses AWS SageMaker and Bedrock to develop personalized workout recommendation systems. Their data scientists build model pipelines in SageMaker and leverage Bedrock’s prompt engineering tools to create a natural language interface that helps users find workouts matching their specific goals and preferences.
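Visual prompt-chaining tools ultimately express a simple idea: the output of one prompt becomes the input of the next. The sketch below shows that idea in plain Python. It does not use any AWS API; `run_chain` and `echo_model` are hypothetical names, and `echo_model` is a stand-in for whatever real model call you would plug in.

```python
from typing import Callable

# Any function that maps a prompt string to a completion string.
Model = Callable[[str], str]


def run_chain(templates: list[str], user_input: str, model: Model) -> list[str]:
    """Run prompts in order; each template's {input} slot receives the previous output."""
    outputs: list[str] = []
    current = user_input
    for template in templates:
        current = model(template.format(input=current))
        outputs.append(current)
    return outputs


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call: returns the prompt upper-cased."""
    return prompt.upper()


steps = [
    "Extract the fitness goals from: {input}",
    "Suggest a workout for these goals: {input}",
]
results = run_chain(steps, "I want to build endurance", echo_model)
```

Returning every intermediate output, not just the last one, makes it easier to see which step in a chain produced a bad result.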

Azure AI Foundry

Microsoft’s Azure AI Foundry provides:

- Prompt Flow: Visual tool for building and deploying LLM applications

- AI Studio Playground: Interactive environment for prompt engineering

- Model Fine-tuning: Tools for customizing models with domain-specific data

- GitHub Copilot: AI pair programming tool

Take the case of Walgreens, which utilized Azure AI Studio to create an AI pharmacist assistant. Using Prompt Flow, they designed a conversational system that could answer customer questions about medications while adhering to strict healthcare regulations. The visual development environment allowed their pharmacists to directly contribute to the AI’s design without deep technical expertise.

Google Vertex AI Studio

Google Cloud’s development environment includes:

- Vertex AI Studio: End-to-end platform for developing generative AI applications

- Model Garden: Curated collection of foundation models

- Gemini API: Simplified access to Google’s flagship AI models

- Duet AI: AI assistant integrated across Google Cloud services (since rebranded as Gemini for Google Cloud)

Consider how The New York Times used Vertex AI Studio to develop an AI-powered content recommendation system. By combining structured user data with Gemini’s natural language understanding capabilities, they created a system that recommends articles based on subtle content themes rather than just keywords, increasing reader engagement by 27%.

Specialized Generative AI Services

Beyond foundation models and development tools, each cloud provider offers specialized services that address specific generative AI use cases.

| Category | AWS | Azure | GCP |
|---|---|---|---|
| Document Intelligence | Amazon Textract + Bedrock | Azure Document Intelligence | Document AI + Vertex AI |
| Conversational AI | Amazon Lex + Bedrock | Azure Bot Service + OpenAI | Dialogflow CX + Vertex AI |
| Code Generation | Amazon CodeWhisperer | GitHub Copilot | Duet AI for Developers |
| Image Generation | Bedrock with Stable Diffusion | DALL-E 3 via Azure OpenAI | Imagen on Vertex AI |
| Enterprise Assistant | Amazon Q | Microsoft Copilot | Duet AI |
| Multimodal Search | Amazon Kendra + Bedrock | Azure Cognitive Search + OpenAI | Vertex AI Search |

Let’s examine a few of these specialized services in detail:

Document Intelligence

Each cloud provider has solutions for extracting insights from documents:

- AWS: Combines Amazon Textract for document processing with Bedrock for summarization and insight generation

- Azure: Document Intelligence (formerly Form Recognizer) with Azure OpenAI for contextual understanding

- GCP: Document AI with Gemini models for complex document understanding

A practical example is how JPMorgan Chase uses Azure Document Intelligence with OpenAI models to process mortgage applications. The system extracts structured data from various document formats and uses generative AI to create comprehensive summaries for loan officers, reducing processing time from days to hours.

Conversational AI Platforms

Building AI assistants and chatbots is a primary use case for generative AI:

- AWS: Amazon Lex enhanced with Bedrock models for more natural conversations

- Azure: Bot Framework + Azure OpenAI for sophisticated dialog management

- GCP: Dialogflow CX combined with Vertex AI for context-aware conversations

Consider how Marriott International implemented a customer service chatbot using Google’s Dialogflow CX with Gemini models. The system handles complex, multi-turn conversations about reservations, loyalty programs, and local recommendations, resolving 67% of customer inquiries without human intervention.
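Multi-turn conversations depend on carrying earlier turns forward with each request. The sketch below shows one common way to do that, assuming a chat-style model that accepts a list of role-tagged messages. The `Conversation` class is a hypothetical helper, not part of Lex, Bot Framework, or Dialogflow CX, each of which manages session state in its own way.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Keeps a system prompt plus a sliding window of recent messages."""

    system_prompt: str
    max_turns: int = 4  # number of user/assistant pairs to retain
    history: list[dict[str, str]] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        """Append a message, then drop the oldest ones beyond the window."""
        self.history.append({"role": role, "content": content})
        keep = self.max_turns * 2
        self.history = self.history[-keep:] if keep else []

    def messages(self) -> list[dict[str, str]]:
        """Return the full payload to send: system prompt first, then recent history."""
        return [{"role": "system", "content": self.system_prompt}, *self.history]


chat = Conversation("You are a hotel reservations assistant.", max_turns=1)
chat.add("user", "Do you have rooms in Lisbon?")
chat.add("assistant", "Yes, from 12 to 15 June.")
chat.add("user", "Book the 13th, please.")
```

A fixed window is the simplest policy. Production systems often summarize older turns instead of discarding them, which is a trade-off between cost and context retention.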

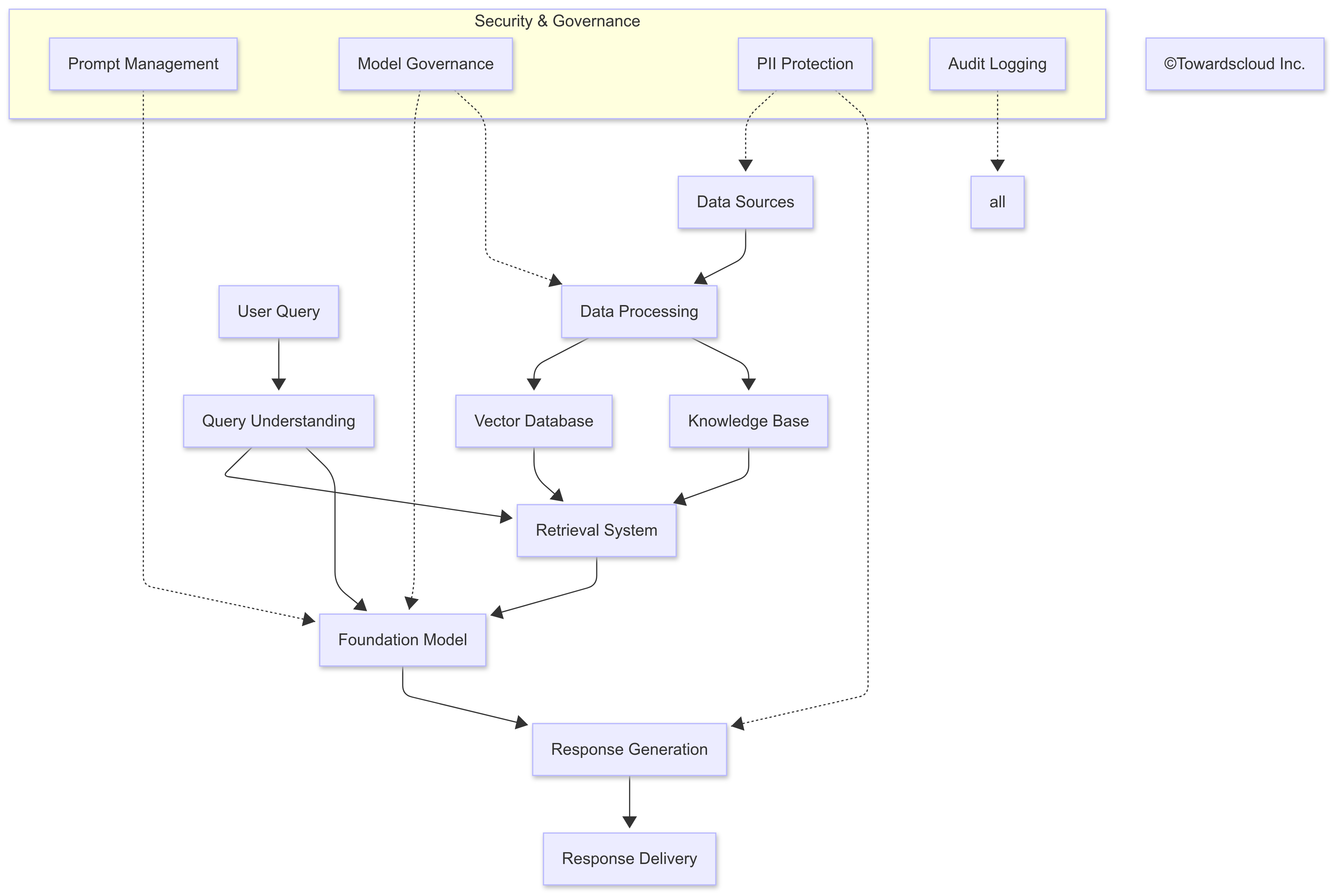

Infrastructure and Integration: The Foundation for Enterprise GenAI

The true value of cloud-based generative AI comes from how well it integrates with existing systems and infrastructure.

Integration Capabilities

Here’s how each provider approaches the integration challenge:

AWS:

- Extensive service integrations between Bedrock and other AWS services

- AWS Lambda functions for serverless AI processing

- Amazon EventBridge for event-driven AI applications

- Step Functions for orchestrating complex AI workflows

A powerful example is how Netflix uses AWS to power its content recommendation engine. By combining Amazon Personalize with Bedrock models, they’ve created a system that not only recommends content based on viewing history but can also generate natural language explanations for why a particular show was recommended.
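The event-driven pattern in the list above can be sketched as a Lambda handler that receives an EventBridge event and asks a model for a short explanation. This is an illustration under assumptions: the `detail` field names (`title`, `recently_watched`) are hypothetical, and the model call is injected as a plain function so the handler logic can be exercised without AWS access.

```python
import json
from typing import Any, Callable


def make_handler(invoke_model: Callable[[str], str]) -> Callable[[dict[str, Any], Any], dict[str, Any]]:
    """Build a Lambda-style handler around any prompt-to-text function."""

    def handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
        # EventBridge delivers the custom payload under the "detail" key.
        detail = event.get("detail", {})
        title = detail.get("title", "unknown title")
        history = ", ".join(detail.get("recently_watched", []))
        prompt = f"In one sentence, explain why '{title}' fits someone who watched: {history}."
        explanation = invoke_model(prompt)
        return {"statusCode": 200, "body": json.dumps({"explanation": explanation})}

    return handler


# Local usage with a stub in place of a real model call.
handler = make_handler(lambda prompt: "stub: " + prompt)
result = handler({"detail": {"title": "Dark", "recently_watched": ["Lost", "Fringe"]}}, None)
```

In a deployed function, `invoke_model` would wrap a call to Bedrock, and Step Functions could sequence several such handlers into a longer workflow.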

Azure:

- Tight integration with Microsoft 365 ecosystem

- Logic Apps for workflow automation with AI capabilities

- Power Platform for low-code AI applications

- Semantic Kernel for building AI plugins and skills

Consider how Spotify leverages Azure’s integration capabilities to enhance its podcast discovery features. Using Azure OpenAI Service connected to their content database, they generate detailed episode summaries and thematic analyses that help listeners find content matching their interests.

GCP:

- Integrated with Google Workspace

- Workflows for serverless orchestration

- API Gateway for managed API access to AI services

- Dataflow for large-scale data processing for AI

An illustrative case is how Airbnb uses GCP’s integration capabilities to enhance their property descriptions. By combining Vertex AI with their existing property database and image repository, they automatically generate rich, accurate property descriptions that highlight the unique features of each listing.

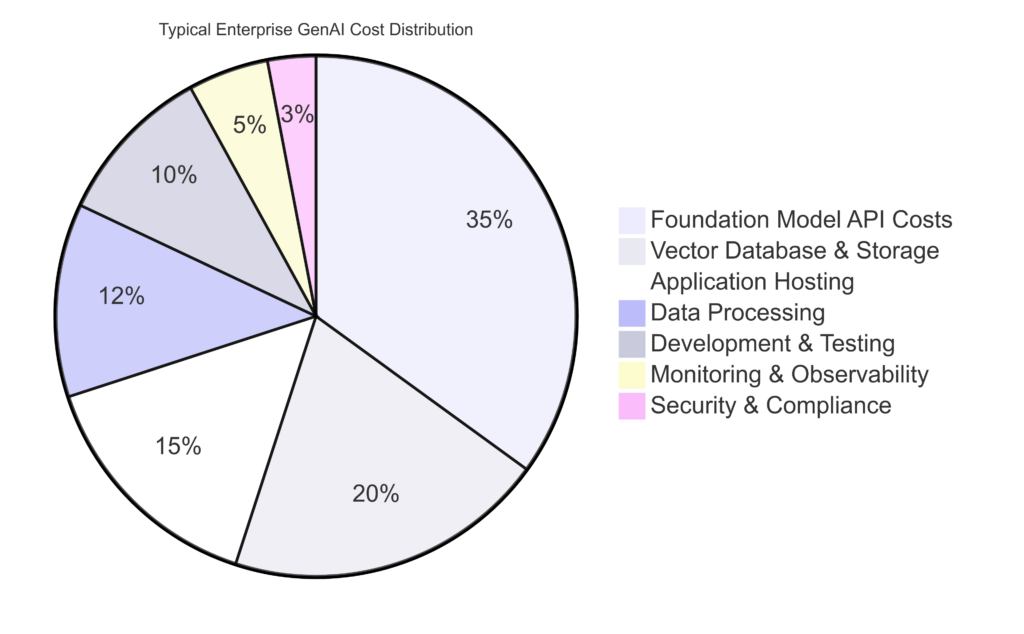

Cost Comparison: The Bottom Line

When evaluating cloud platforms for generative AI, cost is a critical factor. Let’s break down the pricing approaches:

| Provider | Model | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) | Other Factors |
|---|---|---|---|---|
| AWS Bedrock | Claude 3 Opus | $15 | $75 | Volume discounts available |
| AWS Bedrock | Claude 3 Sonnet | $8 | $24 | |
| AWS Bedrock | Titan Text | $3 | $6 | |
| AWS Bedrock | Mistral Large | $7 | $20 | |
| Azure OpenAI | GPT-4 Turbo | $10 | $30 | Enterprise agreements can lower costs |
| Azure OpenAI | GPT-4 | $30 | $60 | |
| Azure OpenAI | GPT-3.5 Turbo | $0.50 | $1.50 | |
| Azure OpenAI | DALL-E 3 | $2 per image | N/A | |
| GCP Vertex AI | Gemini 2.0 Pro | $7 | $21 | Committed use discounts available |
| GCP Vertex AI | Gemini 2.0 Flash | $2 | $6 | |
| GCP Vertex AI | Gemini Ultra | $20 | $60 | |
| GCP Vertex AI | Imagen 3 | $3 per image | N/A | |

Beyond the raw token pricing, it’s essential to consider:

- Infrastructure costs: Running vector databases, storing embeddings, and managing application servers

- Data transfer costs: Moving data between services and out to users

- Storage costs: Storing model artifacts, prompt templates, and generated content

- Development costs: Tools and environments for building and testing AI applications
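Token prices become easier to compare once they are turned into a monthly figure for a realistic workload. The sketch below does that arithmetic for three of the models in the table above, using the listed per-million-token prices. The workload numbers are invented for illustration, and the result covers model usage only, not the infrastructure, transfer, storage, or development costs just listed.

```python
# (input, output) prices in USD per 1M tokens, copied from the pricing table in this article.
PRICES = {
    "claude-3-opus": (15.00, 75.00),
    "gpt-4-turbo": (10.00, 30.00),
    "gpt-3.5-turbo": (0.50, 1.50),
}


def monthly_cost(model: str, requests_per_month: int, input_tokens: int, output_tokens: int) -> float:
    """Estimate monthly model spend for a fixed average request size."""
    input_price, output_price = PRICES[model]
    per_request = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return round(per_request * requests_per_month, 2)


# Hypothetical workload: 100,000 requests a month, 1,500 input and 500 output tokens each.
estimates = {name: monthly_cost(name, 100_000, 1_500, 500) for name in PRICES}
# -> {'claude-3-opus': 6000.0, 'gpt-4-turbo': 3000.0, 'gpt-3.5-turbo': 150.0}
```

Because output tokens cost several times more than input tokens on every model listed, capping response length is often the quickest lever for reducing spend.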

Governance and Responsible AI

As generative AI becomes more deeply embedded in business processes, governance and responsible AI practices become increasingly important.

| Feature | AWS | Azure | GCP |
|---|---|---|---|
| Content Filtering | Bedrock Guardrails | Azure AI Content Safety | Vertex AI Safety |
| Model Cards | Yes | Yes | Yes |
| Prompt Management | Bedrock Prompt Management | Azure AI Studio Prompt Flow | Vertex AI Prompt Library |
| Usage Monitoring | CloudWatch + CloudTrail | Azure Monitor | Cloud Monitoring |
| Responsible AI Guidelines | AWS Responsible AI Policy | Microsoft Responsible AI Standard | Google AI Principles |
| Compliance Tools | AWS Compliance Programs | Azure Compliance Manager | GCP Compliance Resource Center |

Case Study: Financial Services Compliance

Consider how a major financial institution implemented generative AI governance across different cloud platforms:

- On AWS: Used Bedrock Guardrails to implement content filtering that prevents the generation of financial advice not approved by compliance

- On Azure: Leveraged Azure OpenAI’s content filters with custom categories specific to financial regulations

- On GCP: Implemented Vertex AI Safety filters with custom prompt templates designed to enforce compliance standards

The institution found that while all platforms offered robust governance capabilities, Azure’s deeper integration with Microsoft Purview provided additional data governance advantages for their heavily Microsoft-oriented environment.
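Conceptually, a denied-topic filter sits between the model and the user and blocks responses that match a policy. The sketch below illustrates that idea in plain Python with regular expressions. It is not how Bedrock Guardrails, Azure content filters, or Vertex AI safety filters are implemented or configured, and the patterns are hypothetical examples rather than real compliance rules.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    allowed: bool
    reason: str = ""


# Hypothetical denied topics; a real policy would come from the compliance team.
DENIED_TOPICS = {
    "investment advice": re.compile(r"\b(you should (buy|sell)|guaranteed returns?)\b", re.IGNORECASE),
    "account numbers": re.compile(r"\b\d{10,16}\b"),
}


def check_output(text: str) -> Verdict:
    """Return the first policy violation found in model output, or an allow verdict."""
    for topic, pattern in DENIED_TOPICS.items():
        if pattern.search(text):
            return Verdict(False, f"blocked: {topic}")
    return Verdict(True)


safe = check_output("Our savings account has no monthly fee.")
blocked = check_output("You should buy this fund for guaranteed returns.")
```

Managed guardrail services go well beyond keyword matching, but the control flow is similar: check, then either pass the response through or replace it with a refusal and log the reason.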

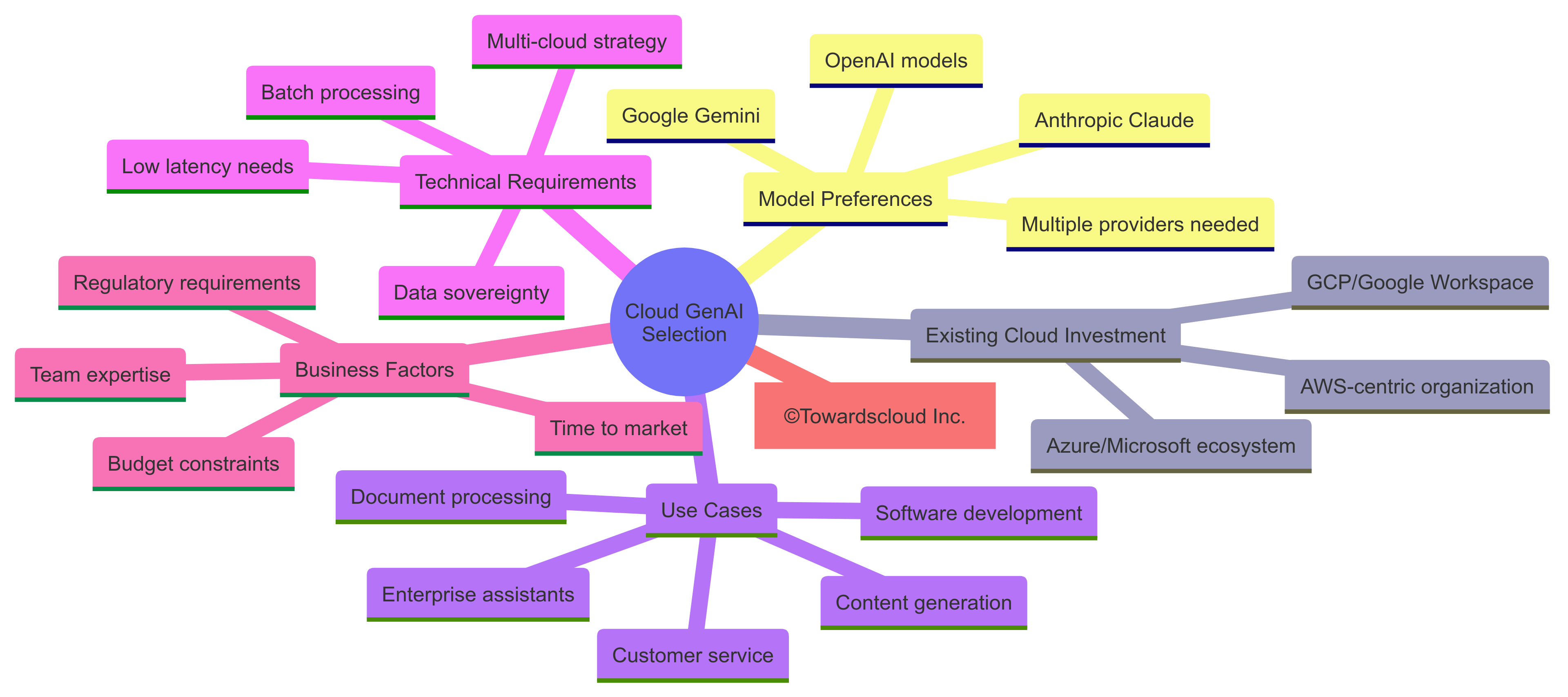

Choosing the Right Platform: Decision Framework

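When selecting a cloud platform for generative AI, start from your organization’s existing ecosystem, its model requirements, and the needs of its developers. The scenarios below map common situations to the platform that tends to fit best.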

Recommendations Based on Scenarios

If your organization is primarily Microsoft-focused: Azure offers the tightest integration with Microsoft 365 and the Microsoft development ecosystem. The combination of Azure OpenAI Service with Power Platform provides a powerful low-code approach to building generative AI applications.

If you need access to multiple foundation models: AWS Bedrock’s marketplace approach gives you the flexibility to experiment with and use different models through a single API, making it ideal for organizations that want to compare model performance or use different models for different tasks.

If advanced multimodal capabilities are critical: Google’s Vertex AI with Gemini models offers some of the strongest multimodal capabilities, particularly for applications that need to understand and generate content across text, images, and structured data.

If developer experience is paramount: All three platforms offer strong developer experiences, but Azure’s combination of OpenAI models with GitHub Copilot and low-code tools gives it an edge for organizations looking to empower both professional developers and citizen developers.

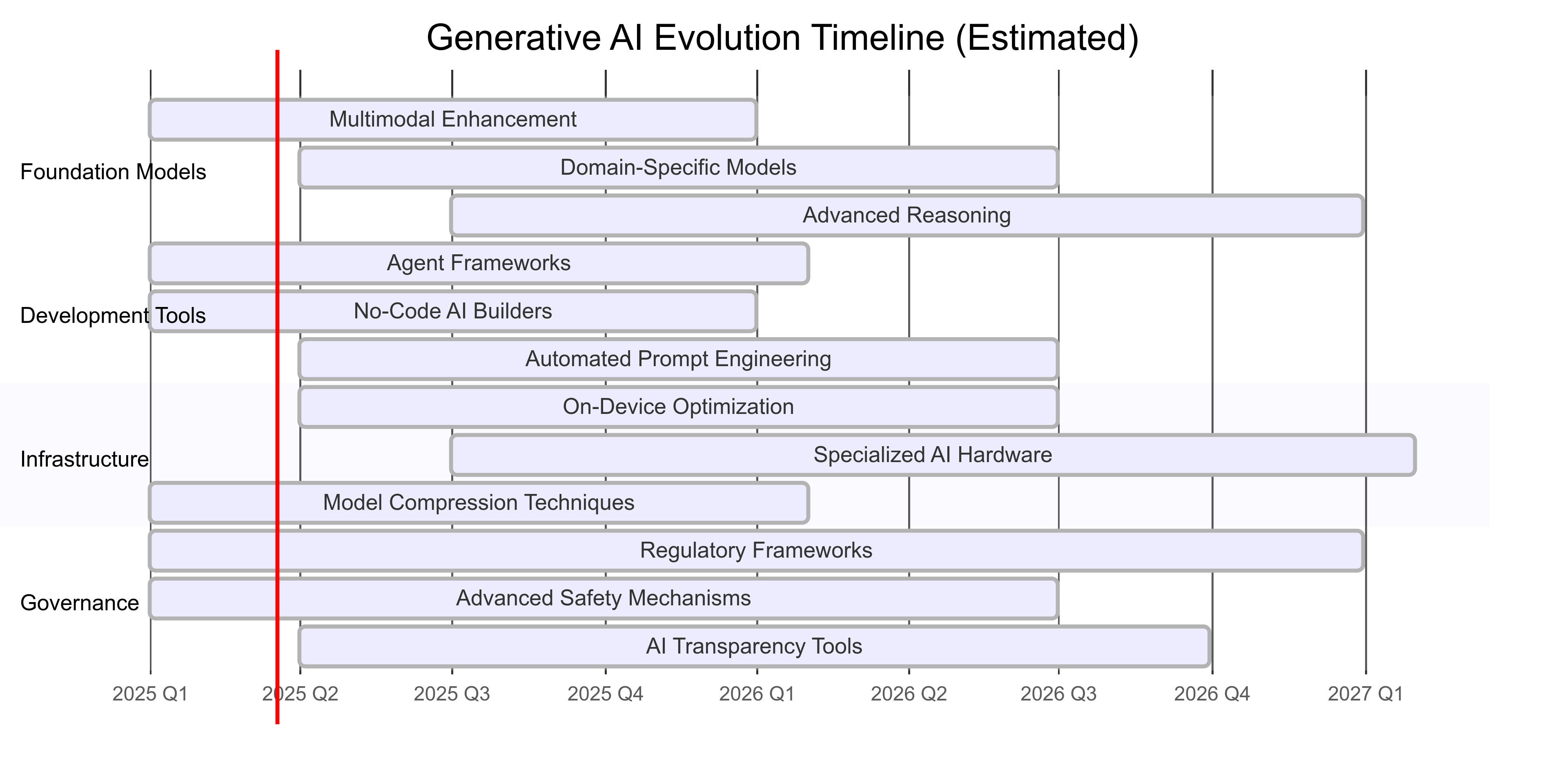

Future Directions: What’s Coming Next

The generative AI landscape is evolving rapidly. Here are emerging trends to watch:

- Specialized industry models: Cloud providers are developing foundation models optimized for specific industries like healthcare, finance, and manufacturing

- Enhanced multimodal capabilities: Next-generation models will process and generate across more modalities (text, images, audio, video) with greater coherence

- Agent frameworks: Tools for building autonomous AI agents that can perform complex tasks with minimal human intervention

- On-device inference: Smaller, optimized models that can run directly on edge devices

- Advanced reasoning capabilities: Models with improved logical reasoning and planning capabilities

Conclusion: Making Your Cloud GenAI Choice

The choice between AWS, Azure, and GCP for generative AI isn’t simply about technical capabilities—it’s about alignment with your organization’s existing investments, skills, and strategic direction.

For organizations that value model diversity and flexibility, AWS Bedrock offers the broadest marketplace of foundation models. Those deeply invested in the Microsoft ecosystem will find Azure OpenAI Service provides the most seamless integration. Companies looking for cutting-edge multimodal capabilities may lean toward Google Cloud’s Vertex AI with Gemini models.

The most successful generative AI implementations often combine the strengths of multiple platforms, using a best-of-breed approach that leverages each provider’s unique advantages while maintaining a cohesive architecture.

As you embark on your generative AI journey, remember that the technology is evolving rapidly. The cloud provider landscape will continue to change as models improve, new capabilities emerge, and pricing models evolve. Building flexibility into your architecture will be key to adapting to this dynamic environment.
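One way to build in that flexibility is to keep application code behind a small provider-neutral interface, so a model can be swapped or routed per task without touching callers. The sketch below is a minimal illustration of the pattern. `TextModel`, `StubModel`, and `ModelRouter` are hypothetical names, and the stubs stand in for thin wrappers around each provider's SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """Anything that can turn a prompt into text, regardless of provider."""

    def generate(self, prompt: str) -> str: ...


class StubModel:
    """Placeholder backend; a real one would call Bedrock, Azure OpenAI, or Vertex AI."""

    def __init__(self, name: str) -> None:
        self.name = name

    def generate(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Routes each task to a registered backend, falling back to a default."""

    def __init__(self, default: str) -> None:
        self._models: dict[str, TextModel] = {}
        self._routes: dict[str, str] = {}
        self._default = default

    def register(self, name: str, model: TextModel) -> None:
        self._models[name] = model

    def route(self, task: str, name: str) -> None:
        self._routes[task] = name

    def generate(self, task: str, prompt: str) -> str:
        name = self._routes.get(task, self._default)
        return self._models[name].generate(prompt)


router = ModelRouter(default="bedrock")
router.register("bedrock", StubModel("bedrock"))
router.register("vertex", StubModel("vertex"))
router.route("image-captioning", "vertex")
```

With this layout, moving a task to a different provider is a one-line change to the routing table, which also makes side-by-side evaluation of models straightforward.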

What’s your current cloud provider for AI workloads? Are you considering a multi-cloud approach for generative AI? Share your thoughts and experiences in the comments below!

This comparison aims to give IT professionals a clear picture of how AWS, Azure, and GCP implement generative AI capabilities. The field moves quickly and new features are released regularly, so check each provider’s latest documentation, along with Towardscloud.com, for the most up-to-date information.