In the rapidly evolving landscape of artificial intelligence, Google Cloud Platform (GCP) has positioned itself as a formidable player in providing comprehensive services for generative AI. As organizations seek to harness the power of this transformative technology, understanding GCP’s unique offerings becomes crucial for making informed decisions. Let’s explore the rich ecosystem of generative AI services that Google Cloud brings to the table.

The Foundation of Google’s Generative AI Strategy

Google’s approach to generative AI is built upon decades of research in machine learning, natural language processing, and computer vision. Their strategy leverages this deep expertise to create accessible, powerful, and responsible AI tools for businesses of all sizes.

Gemini: Google’s Most Advanced AI Models

At the heart of Google Cloud’s generative AI offerings is Gemini, their newest and most capable family of multimodal AI models from Google DeepMind. Gemini can understand virtually any input, including text, images, video, and code, and generate almost any output.

Gemini Model Variants

Gemini is available in several configurations, each optimized for different needs:

- Gemini 2.0 Pro: A versatile model offering strong performance across a wide range of tasks, including code generation, logical reasoning, and accessing world knowledge. It boasts a large context window for processing longer inputs.

- Gemini 2.0 Flash: Optimized for speed and efficiency, making it suitable for real-time interactions and high-volume processing while maintaining strong multimodal understanding.

- Gemini 2.0 Flash-Lite: Further optimized for cost efficiency and low latency in high-throughput scenarios.

- Gemini 1.5 Pro: Excels in processing exceptionally long sequences of information with its extraordinary long-context understanding capabilities.

- Gemini 1.5 Flash: Provides a balance of speed and versatility for a broad spectrum of tasks and modalities.

- Gemini Deep Research: An AI-powered tool, rather than a model variant, that allows users to conduct in-depth research on various topics, generating comprehensive reports with key findings and links to original sources.
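To make the choice between variants more concrete, here is a minimal sketch of how an application might map a workload type to a model and build a request body in the shape used by the Gemini `generateContent` REST method. The model ID strings, the `MODEL_BY_WORKLOAD` table, and the `pick_model` and `build_request` helpers are assumptions for illustration only; check the current model list before relying on any identifier.

```python
import json

# Hypothetical mapping from workload type to a model ID. The IDs are
# illustrative and should be verified against the current model list.
MODEL_BY_WORKLOAD = {
    "complex_reasoning": "gemini-2.0-pro",
    "realtime": "gemini-2.0-flash",
    "high_volume": "gemini-2.0-flash-lite",
    "long_context": "gemini-1.5-pro",
}


def pick_model(workload: str) -> str:
    """Return a model ID for a workload, defaulting to the fast tier."""
    return MODEL_BY_WORKLOAD.get(workload, "gemini-2.0-flash")


def build_request(prompt: str, temperature: float = 0.2,
                  max_output_tokens: int = 256) -> dict:
    """Build a generateContent-style request body for a text-only prompt."""
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {
            "temperature": temperature,
            "maxOutputTokens": max_output_tokens,
        },
    }


if __name__ == "__main__":
    model = pick_model("realtime")
    body = build_request("Summarize this support ticket in two sentences.")
    print(model)
    print(json.dumps(body, indent=2))
```

Keeping the routing table separate from the request builder means a team can swap model tiers without touching the code that assembles prompts.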

A real-world example of Gemini’s impact comes from Priceline, which implemented Gemini to enhance its customer support operations. By developing a Gemini-powered assistant, Priceline improved the accuracy of responses to complex travel inquiries.

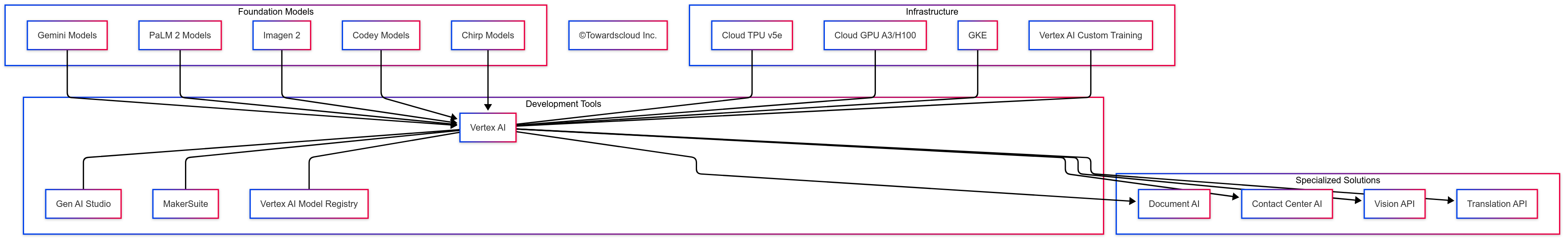

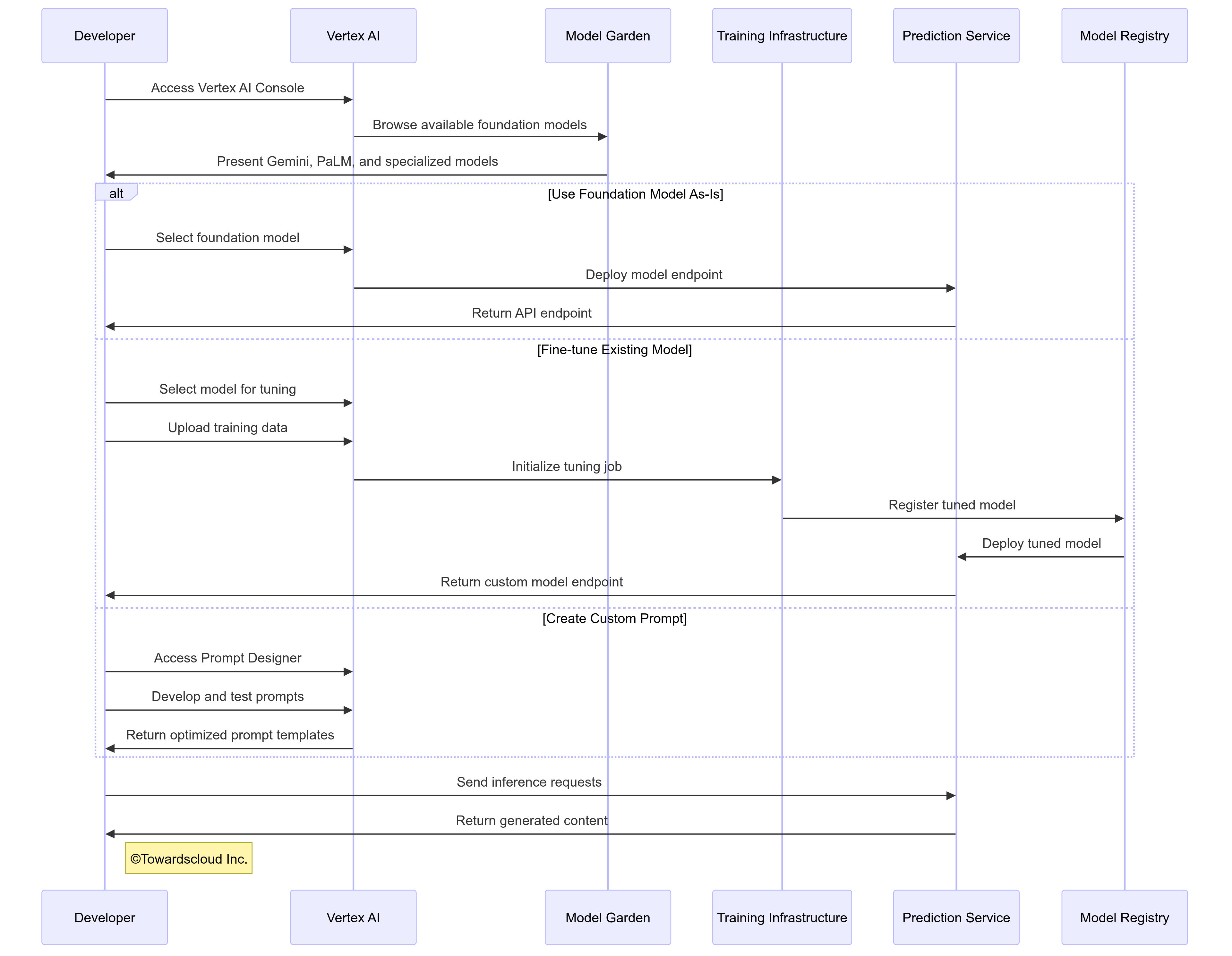

Vertex AI: The Unified Platform for AI Development

Vertex AI serves as Google Cloud’s fully managed, unified platform for building, deploying, and managing machine learning models, with a significant focus on generative AI capabilities. It streamlines the entire generative AI lifecycle, offering a centralized hub for various functionalities.

Key Vertex AI Features for Generative AI

Vertex AI offers several specialized tools that make generative AI more accessible and practical for businesses:

1. Model Garden

Model Garden provides a unified interface to access and experiment with various foundation models, including:

- Gemini models

- Imagen for image generation

- Gemma, a family of open models built with the same research as Gemini

- Third-party models like Anthropic’s Claude model family

This “try before you buy” approach allows developers to evaluate models without immediate commitment, significantly reducing the risk associated with AI implementation.

2. Vertex AI Studio

Vertex AI Studio offers an intuitive, web-based, no-code environment for prompt design, testing, and deployment of generative AI models. This democratizes access to generative AI by enabling business users without deep technical expertise to create effective prompts and deploy them in production scenarios.

A client recently used Vertex AI Studio to develop a system that generates detailed maintenance procedures based on equipment inspection reports. Their engineering team, with minimal AI expertise, was able to create an effective system in just three weeks, compared to the several months originally estimated for a custom development approach.

3. Tuning and Customization

Vertex AI provides multiple approaches to customize foundation models to your specific needs:

- Parameter-efficient tuning: Fine-tune models with minimal computational resources.

- Context tuning: Provide additional context to guide model responses.

- Grounding: Connect models to your specific data sources so that responses are anchored in verifiable facts.

- Retrieval-augmented generation (RAG): A common way to implement grounding, in which relevant documents are retrieved at query time and supplied to the model to enhance its responses.
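The retrieval-augmented pattern in the list above can be sketched in a few lines. This is a toy illustration under stated assumptions, not how Vertex AI implements grounding: retrieval here is plain word overlap rather than embeddings, and the `tokenize`, `retrieve`, and `build_grounded_prompt` helpers and the sample documents are all hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by shared words with the query; keep matching top_k."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_grounded_prompt(query: str, passages: list[str]) -> str:
    """Assemble a prompt that tells the model to answer from the passages."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    docs = [
        "Refunds are issued within 14 days of a return request.",
        "Support is available on weekdays from 9 to 5.",
        "Returns require the original receipt.",
    ]
    question = "How many days do refunds take?"
    print(build_grounded_prompt(question, retrieve(question, docs)))
```

In a production setup the overlap score would be replaced by an embedding-based similarity search, but the shape of the flow (retrieve, then assemble a constrained prompt) stays the same.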

4. Model Evaluation and Responsible AI

Google Cloud’s commitment to responsible AI is evident in Vertex AI’s evaluation capabilities:

- Model Evaluation: Compare models across metrics like quality, toxicity, and groundedness.

- Safety filters: Built-in systems to prevent harmful content generation.

- Explanation tools: Understand how models reach specific conclusions.
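As a rough sketch of what comparing models across quality, toxicity, and groundedness can look like, the snippet below averages per-response scores and picks the best model that stays under a toxicity limit. The score values, the equal weighting of quality and groundedness, the `max_toxicity` threshold, and the function names are assumptions for illustration; they are not Vertex AI's evaluation API.

```python
from statistics import mean
from typing import Optional

Scores = dict[str, float]  # one row of metric scores for a single response


def average_scores(rows: list[Scores]) -> Scores:
    """Average each metric over all scored responses for one model."""
    metrics = rows[0].keys()
    return {metric: mean(row[metric] for row in rows) for metric in metrics}


def select_model(results: dict[str, list[Scores]],
                 max_toxicity: float = 0.05) -> Optional[str]:
    """Pick the model with the best quality/groundedness average among
    those whose mean toxicity does not exceed max_toxicity."""
    best_name, best_score = None, -1.0
    for name, rows in results.items():
        avg = average_scores(rows)
        if avg["toxicity"] > max_toxicity:
            continue  # fails the safety gate, so it is not ranked at all
        combined = (avg["quality"] + avg["groundedness"]) / 2
        if combined > best_score:
            best_name, best_score = name, combined
    return best_name


if __name__ == "__main__":
    # Hypothetical scores in [0, 1]; lower toxicity is better.
    results = {
        "model_a": [
            {"quality": 0.9, "toxicity": 0.02, "groundedness": 0.7},
            {"quality": 0.8, "toxicity": 0.04, "groundedness": 0.8},
        ],
        "model_b": [
            {"quality": 0.95, "toxicity": 0.10, "groundedness": 0.9},
            {"quality": 0.9, "toxicity": 0.08, "groundedness": 0.9},
        ],
    }
    print(select_model(results))
```

Treating toxicity as a hard gate rather than one more weighted term reflects the idea that a safety failure should not be offset by higher quality.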

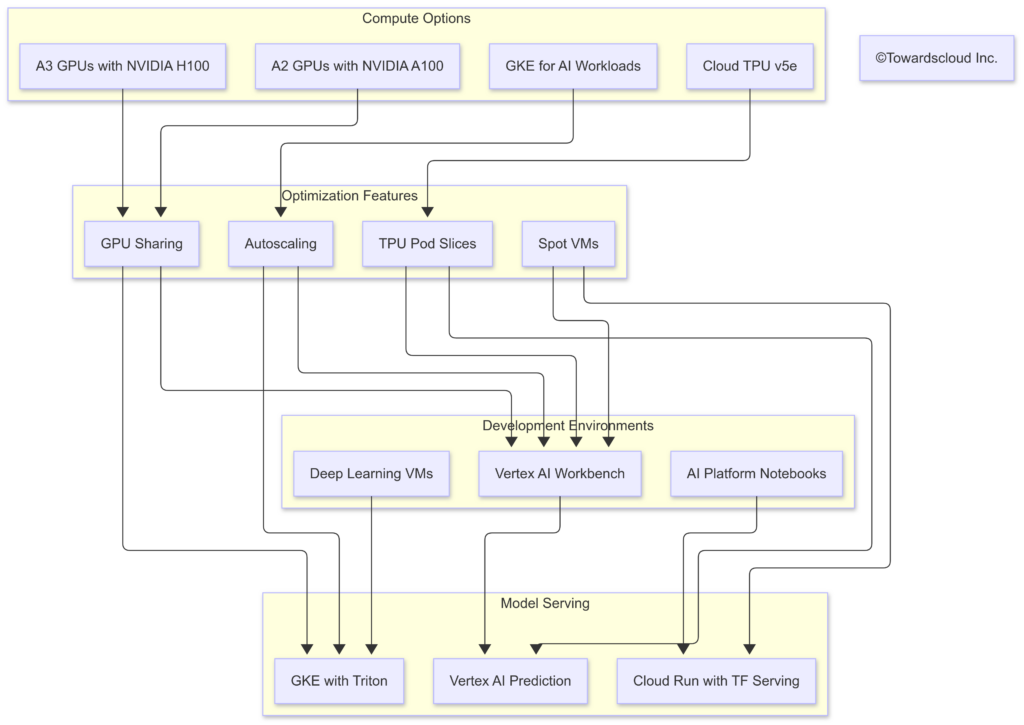

AI Infrastructure: Powering the Generative AI Revolution

Google Cloud provides specialized infrastructure optimized for generative AI workloads:

- Cloud TPU v5e: Google’s purpose-built Tensor Processing Units (TPUs) offer up to 2x better performance-per-dollar compared to previous generations and are optimized for both training and inference of large language models. They are available in pod configurations for massive parallelization.

- A3 GPU Instances with NVIDIA H100: For those preferring GPU-based computing, Google Cloud offers A3 instances powered by NVIDIA H100 Tensor Core GPUs. Each A3 machine can contain up to 8 H100 GPUs, interconnected with NVLink and NVSwitch for ultra-fast GPU-to-GPU communication and supported by Google’s networking infrastructure for efficient multi-node training.

- Compute Engine: Provides virtual machines for running various AI workloads.

- Cloud Storage: Offers secure and scalable object storage for large datasets.

- Google Kubernetes Engine (GKE): Provides a managed environment for running containerized AI and machine learning workloads, offering scalability and flexibility.

Cost Optimization Strategies

Google Cloud provides several approaches to manage the costs associated with generative AI workloads:

- Spot VMs: Take advantage of unused capacity at significant discounts (up to 91%).

- Capacity reservations: Secure resources for planned workloads.

- Custom machine types: Pay only for the CPU and memory you need.

- GKE Autopilot: Automated cluster management to optimize resource utilization.
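A quick back-of-the-envelope calculation helps when weighing Spot VMs against on-demand capacity. Spot capacity can be reclaimed, so interrupted jobs may repeat some work. The sketch below assumes a hypothetical hourly price and a hypothetical `rework_fraction` (extra hours spent redoing interrupted work); neither number comes from Google's price list.

```python
def effective_spot_cost(on_demand_hourly: float, hours: float,
                        discount: float, rework_fraction: float = 0.0) -> float:
    """Estimate the cost of a job on Spot VMs.

    discount is the Spot discount as a fraction (0.6 means 60% off).
    rework_fraction is the share of extra hours lost to interruptions.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    if rework_fraction < 0.0:
        raise ValueError("rework_fraction must be non-negative")
    billed_hours = hours * (1.0 + rework_fraction)
    return on_demand_hourly * (1.0 - discount) * billed_hours


if __name__ == "__main__":
    # Hypothetical job: 100 hours on a machine priced at 10.00 per hour.
    on_demand = 10.0 * 100.0
    spot = effective_spot_cost(10.0, 100.0, discount=0.5, rework_fraction=0.2)
    print(f"on-demand: {on_demand:.2f}, spot with rework: {spot:.2f}")
```

Even with a fifth of the work repeated, a 50% discount still leaves the Spot run well below the on-demand figure in this example, which is why the approach suits fault-tolerant workloads with checkpointing.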

Specialized Solutions Built on Generative AI

Google Cloud has developed several industry-specific solutions that leverage generative AI capabilities:

Document AI

Document AI applies generative AI to document processing workflows:

- Extract structured data from unstructured documents

- Summarize lengthy documents into concise overviews

- Generate responses to queries about document contents

- Create document templates based on existing examples

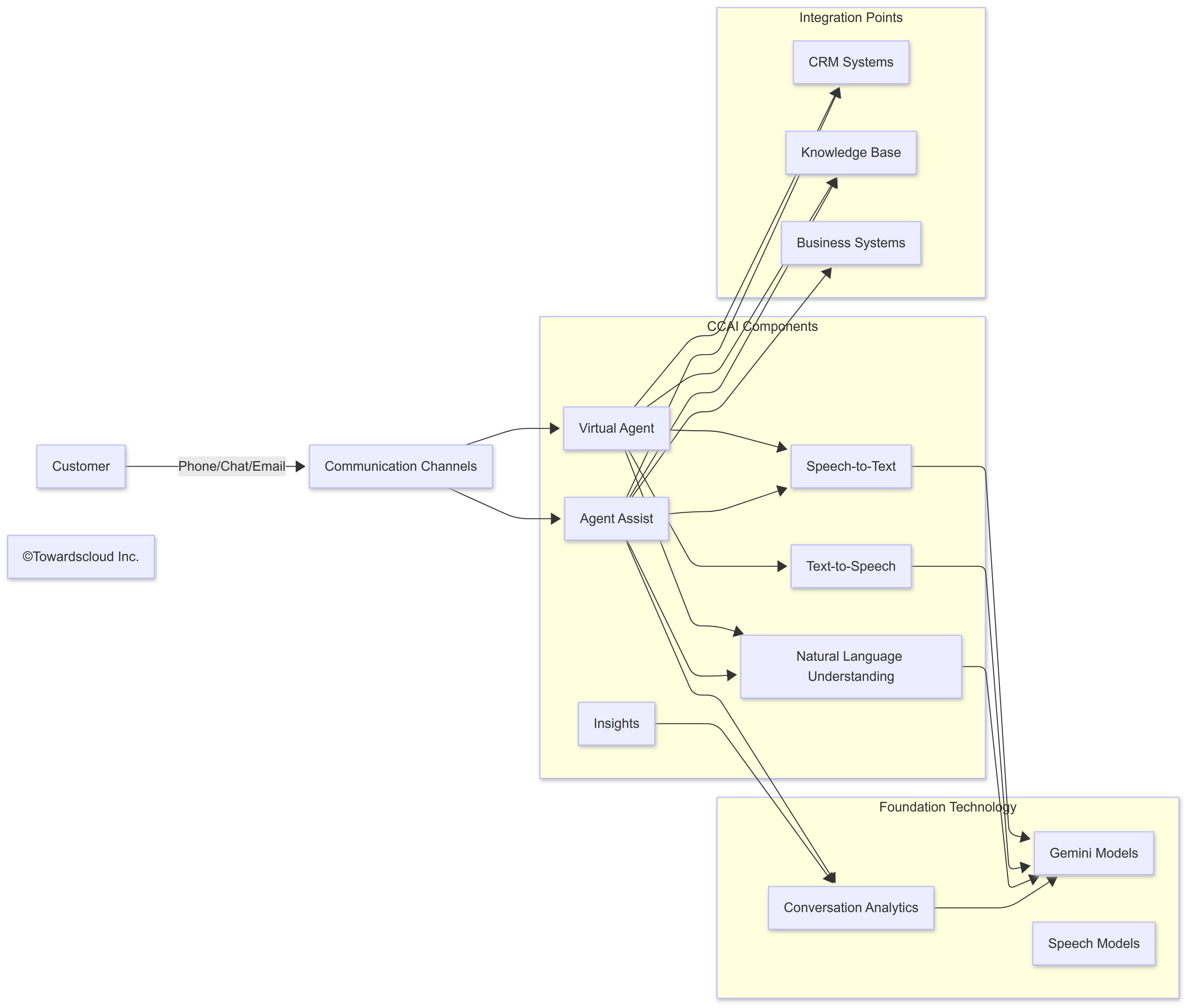

Contact Center AI (CCAI)

CCAI enhances customer service operations with generative AI:

- Virtual agents that handle routine inquiries with natural conversation

- Agent assist features that provide real-time guidance to human agents

- Post-call summarization and analysis

- Intent detection and sentiment analysis

A telecommunications company implemented CCAI and saw their first-contact resolution rate improve by 27%, while reducing average handle time by 41 seconds across all customer interactions. The system’s ability to understand complex customer descriptions of technical issues proved particularly valuable.

Medical Imaging AI

One of the most impactful specialized applications is in healthcare imaging:

- Analysis of radiological images to detect anomalies

- Automatic report generation based on imaging findings

- Prioritization of urgent cases in radiology workflows

- Longitudinal tracking of changes across multiple scans

Retail Product Recommendations

Retail companies benefit from generative AI through:

- Personalized product descriptions based on customer preferences

- Dynamic bundle suggestions that evolve with inventory and trends

- Natural language search capabilities that understand intent

- Content generation for marketing campaigns and product launches

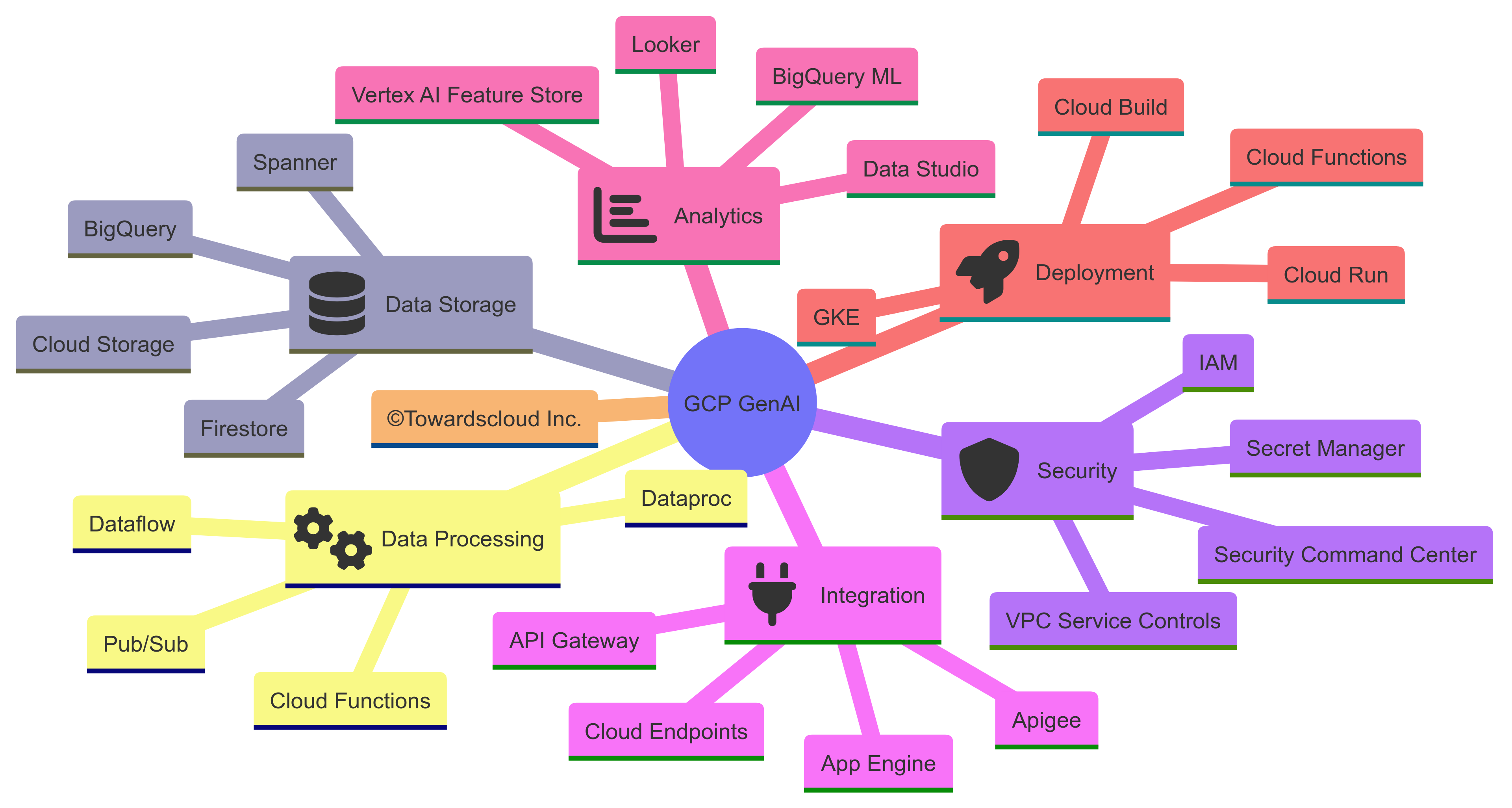

Integration with the Broader GCP Ecosystem

A key strength of Google’s generative AI offering is its seamless integration with other GCP services:

BigQuery Integration

The integration between Vertex AI and BigQuery is particularly powerful:

- Run generative AI inferences directly within SQL queries

- Process massive datasets without moving them between systems

- Combine traditional analytics with generative capabilities

- Pipeline outputs directly to visualization tools like Looker
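To illustrate what running inference inside a SQL query looks like, the sketch below builds a query string around BigQuery ML's `ML.GENERATE_TEXT` table function, which takes a remote model, a subquery exposing a `prompt` column, and a `STRUCT` of options. The project, dataset, model, table, and column names are hypothetical, and the `build_generate_text_query` helper is an illustration rather than part of any Google client library.

```python
import re

_IDENTIFIER = re.compile(r"^[A-Za-z0-9_.\-]+$")
_COLUMN = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def build_generate_text_query(model: str, table: str, text_column: str,
                              instruction: str, temperature: float = 0.2,
                              max_output_tokens: int = 128) -> str:
    """Build a BigQuery SQL string that calls ML.GENERATE_TEXT per row."""
    # Reject anything that does not look like a plain identifier, since the
    # names are interpolated directly into the SQL text.
    if not _IDENTIFIER.match(model) or not _IDENTIFIER.match(table):
        raise ValueError("model and table must be plain identifiers")
    if not _COLUMN.match(text_column):
        raise ValueError("text_column must be a plain column name")
    # Escape backslashes and single quotes for a SQL string literal.
    literal = instruction.replace("\\", "\\\\").replace("'", "\\'")
    return (
        "SELECT *\n"
        "FROM ML.GENERATE_TEXT(\n"
        f"  MODEL `{model}`,\n"
        f"  (SELECT CONCAT('{literal}', {text_column}) AS prompt "
        f"FROM `{table}`),\n"
        f"  STRUCT({temperature} AS temperature, "
        f"{max_output_tokens} AS max_output_tokens)\n"
        ")"
    )


if __name__ == "__main__":
    print(build_generate_text_query(
        model="my_project.my_dataset.gemini_remote_model",  # hypothetical
        table="my_project.my_dataset.support_tickets",      # hypothetical
        text_column="ticket_text",
        instruction="Summarize this ticket in one sentence: ",
    ))
```

Because the prompt is assembled by the subquery, the data never leaves BigQuery before the model is invoked, which is the point of the second bullet above.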

Google Workspace Integration

For organizations already using Google Workspace, the generative AI capabilities integrate directly with familiar tools:

- Generate content directly within Google Docs

- Create presentations in Slides based on natural language descriptions

- Summarize and analyze data in Sheets

- Draft and refine emails in Gmail

API Management with Apigee

For organizations looking to monetize or share their generative AI applications, Apigee provides:

- API governance and security

- Rate limiting and quotas

- Developer portals

- Monetization frameworks

- Analytics on API usage

Real-World Implementation Patterns

Several common patterns have emerged:

1. The Hybrid Approach

Most successful implementations combine pre-trained foundation models with enterprise-specific data:

Foundation Model (Gemini Pro) + Enterprise Data (via Vector Search) → Custom GenAI Solution

For example, a financial services client used this approach to create a compliance assistant that could reference their internal policies and regulatory guidelines while leveraging Gemini’s general language capabilities.
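The vector search step in this pattern boils down to ranking stored embeddings by similarity to a query embedding. Here is a minimal in-memory sketch using cosine similarity. The three-dimensional vectors and document IDs are made up for illustration; a real deployment would use model-generated embeddings and a managed index rather than a Python dictionary.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], index: dict[str, list[float]],
          k: int = 2) -> list[tuple[str, float]]:
    """Return the k document IDs most similar to the query, best first."""
    scored = [(doc_id, cosine_similarity(query_vec, vec))
              for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


if __name__ == "__main__":
    # Hypothetical embeddings for three internal documents.
    index = {
        "policy_kyc": [0.9, 0.1, 0.0],
        "policy_travel": [0.0, 0.2, 0.9],
        "reg_aml": [0.8, 0.3, 0.1],
    }
    query = [1.0, 0.2, 0.0]
    for doc_id, score in top_k(query, index):
        print(f"{doc_id}: {score:.3f}")
```

The IDs returned here would be used to fetch the matching policy text, which is then passed to the foundation model alongside the user's question.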

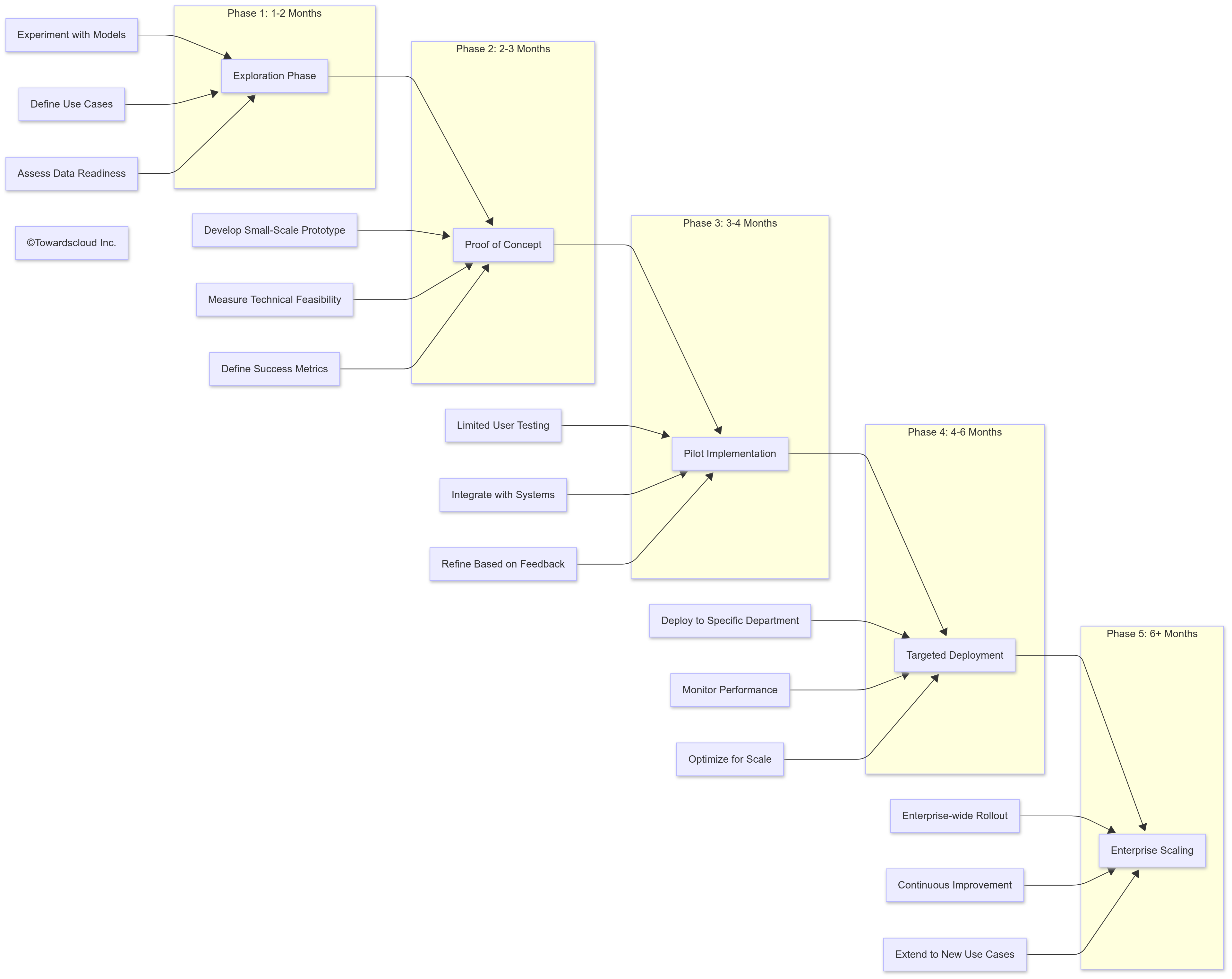

2. The Progressive Implementation

Rather than attempting a comprehensive generative AI transformation, successful organizations typically roll out generative AI in stages.

3. The Center of Excellence Model

Organizations seeing the most success with generative AI on GCP typically establish a Center of Excellence (CoE) that:

- Develops governance frameworks for responsible AI use

- Creates reusable components and patterns

- Provides training and support for implementation teams

- Evaluates new models and capabilities as they become available

- Measures and reports on business impact

Cost Considerations

Understanding the cost structure of generative AI on GCP is crucial for planning and budgeting:

Model Usage Costs

- Foundation Model API calls: Charged per 1,000 input tokens and 1,000 output tokens

- Vertex AI Training: Charged per node hour based on accelerator type

- Vertex AI Prediction: Charged based on provisioned capacity or per-request
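Per-token pricing is easy to reason about with a small estimator. The sketch below applies the per-1,000-token model described above. The prices and traffic figures are placeholders, not published rates, and the function names are hypothetical.

```python
def estimate_request_cost(input_tokens: int, output_tokens: int,
                          input_price_per_1k: float,
                          output_price_per_1k: float) -> float:
    """Cost of one request when input and output tokens are priced per 1,000."""
    return ((input_tokens / 1000) * input_price_per_1k
            + (output_tokens / 1000) * output_price_per_1k)


def estimate_monthly_cost(requests_per_day: int, input_tokens: int,
                          output_tokens: int, input_price_per_1k: float,
                          output_price_per_1k: float, days: int = 30) -> float:
    """Scale the per-request cost to a month of steady traffic."""
    per_request = estimate_request_cost(
        input_tokens, output_tokens, input_price_per_1k, output_price_per_1k
    )
    return per_request * requests_per_day * days


if __name__ == "__main__":
    # Placeholder prices per 1,000 tokens; substitute the current rates.
    monthly = estimate_monthly_cost(
        requests_per_day=10_000, input_tokens=2_000, output_tokens=500,
        input_price_per_1k=0.0005, output_price_per_1k=0.0015,
    )
    print(f"estimated monthly cost: {monthly:.2f}")
```

Because input and output tokens are priced separately, trimming long prompts and capping response length affect the bill independently.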

Infrastructure Costs

- Computing resources: TPUs, GPUs, CPUs

- Storage: Vector databases, model artifacts, training data

- Networking: Data transfer, API calls

Cost Optimization Strategies

Several strategies can help manage generative AI costs on GCP:

- Right-size models: Use the smallest model that meets your quality requirements

- Optimize prompts: Shorter prompts reduce token consumption

- Cache common responses: Avoid regenerating identical content

- Leverage spot instances: For non-critical workloads

- Monitor usage patterns: Identify and address inefficient implementations
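Caching common responses, one of the strategies listed above, can be sketched as a small wrapper around whatever function calls the model. This is a minimal in-memory illustration: the `ResponseCache` class and the stand-in `fake_generate` function are hypothetical, and a production cache would also need expiry and size limits.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Memoize model responses keyed by model name and normalized prompt."""

    def __init__(self, generate: Callable[[str, str], str]) -> None:
        self._generate = generate
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> str:
        """Return a cached response, calling the model only on a miss."""
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._generate(model, prompt)
        self._store[key] = response
        return response


def fake_generate(model: str, prompt: str) -> str:
    """Stand-in for a real model call, used only for this demonstration."""
    return f"[{model}] response to: {prompt}"


if __name__ == "__main__":
    cache = ResponseCache(fake_generate)
    cache.get("example-model", "What is our refund policy?")
    cache.get("example-model", "What is our   refund policy?")
    print(f"hits={cache.hits} misses={cache.misses}")
```

Caching only pays off for deterministic or near-deterministic use cases such as FAQ answers; for creative generation, identical outputs may not be what users want.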

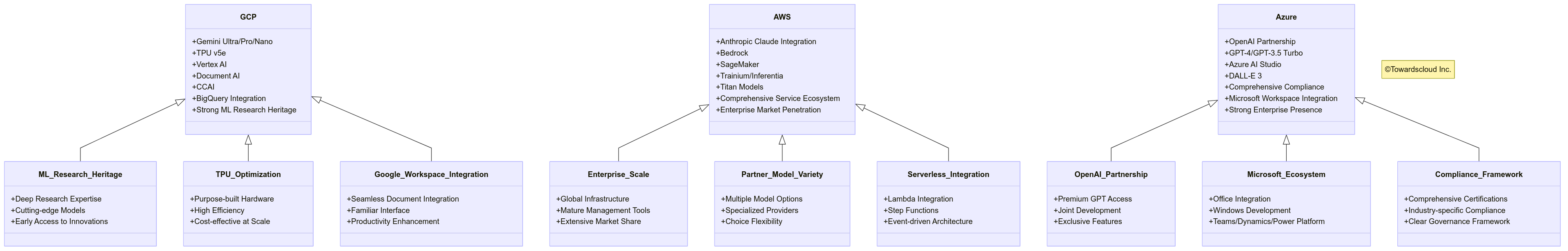

Comparison with Other Cloud Providers

While each cloud provider has strengths, Google Cloud offers distinct advantages for generative AI:

Key GCP Differentiators

- Research Leadership: Google’s deep research expertise in AI translates to cutting-edge models and features

- Integrated AI Stack: From TPUs to high-level APIs, Google provides a complete, optimized stack

- Multimodal Capabilities: Native support for text, image, video, and audio in a single model architecture

- Enterprise Controls: Robust governance, monitoring, and explainability features

Future Directions for GCP GenAI

Several emerging trends suggest where Google Cloud’s generative AI offerings are headed:

1. Agent-Based Architectures

Moving beyond simple prompt-response patterns to persistent agents that can:

- Maintain conversation context across sessions

- Execute multi-step tasks autonomously

- Interact with various systems through API integrations

- Learn from past interactions to improve performance
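The difference between a prompt-response call and an agent is mostly a loop: plan a step, call a tool, record the result, repeat. The sketch below shows that loop with a scripted planner standing in for the model. Every name here (`Agent`, `scripted_planner`, the two tools, the order ID) is hypothetical, and a real agent would ask a model to choose each step.

```python
from typing import Callable, Optional

Step = tuple[str, str]  # (tool name, tool input)
Planner = Callable[[str, list[str]], Optional[Step]]


class Agent:
    """Run a plan-act loop, keeping a history of tool calls as context."""

    def __init__(self, tools: dict[str, Callable[[str], str]],
                 planner: Planner, max_steps: int = 5) -> None:
        self.tools = tools
        self.planner = planner
        self.max_steps = max_steps

    def run(self, goal: str) -> list[str]:
        history: list[str] = []
        for _ in range(self.max_steps):
            step = self.planner(goal, history)
            if step is None:  # the planner signals that the task is done
                break
            name, arg = step
            tool = self.tools.get(name)
            result = tool(arg) if tool else f"unknown tool: {name}"
            history.append(f"{name}({arg}) -> {result}")
        return history


def scripted_planner(goal: str, history: list[str]) -> Optional[Step]:
    """Stand-in for a model: follow a fixed two-step plan."""
    plan = [("lookup_order", "A-1001"), ("notify_customer", "A-1001 update")]
    return plan[len(history)] if len(history) < len(plan) else None


if __name__ == "__main__":
    tools = {
        "lookup_order": lambda order_id: "shipped",
        "notify_customer": lambda message: "sent",
    }
    agent = Agent(tools, scripted_planner)
    for line in agent.run("Tell the customer where order A-1001 is"):
        print(line)
```

The `max_steps` cap is a deliberate safeguard: an autonomous loop needs a hard stop in case the planner never declares the task finished.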

2. Domain-Specific Optimization

More specialized versions of foundation models optimized for:

- Industry-specific terminology and concepts

- Regulatory compliance in regulated industries

- Technical domains like software development

- Creative fields like marketing and design

3. Enhanced Composability

The ability to combine AI capabilities in flexible ways:

- Modular AI components that can be assembled like building blocks

- Specialized models working together through orchestration layers

- Custom pipelines combining multiple AI techniques

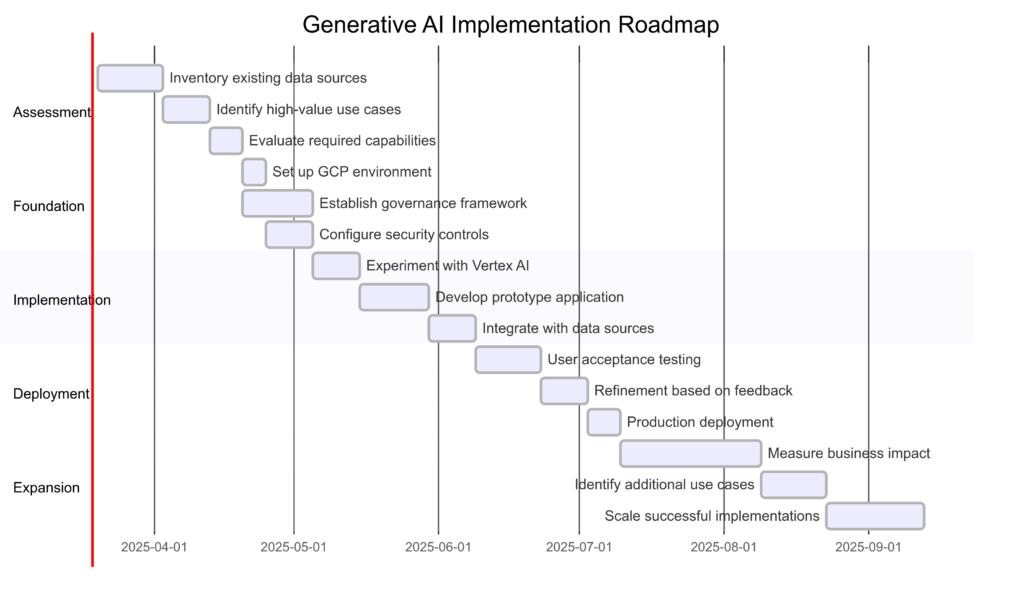

Getting Started with GenAI on GCP

For organizations looking to begin their generative AI journey on Google Cloud, the following approach is recommended:

1. Explore and Experiment

Begin with Google’s no-code tools to build familiarity:

- Use Vertex AI Studio to experiment with different models

- Try prompt variants to understand model capabilities

- Test small datasets to assess performance on your specific use cases

2. Develop Skills

Build the necessary expertise within your organization:

- Google Cloud’s generative AI learning path provides structured training

- Practical exercises with Colab and Vertex AI Workbench build hands-on skills

- Focus on prompt engineering as a critical foundation skill

3. Build a Proof of Concept

Select a well-defined, high-value use case:

- Choose a problem with clear success metrics

- Ensure sufficient data availability

- Select use cases where some imperfection is acceptable

- Focus on augmenting human capabilities rather than replacing them

Conclusion: The Strategic Advantage of GCP for Generative AI

Google Cloud Platform offers a compelling combination of technological innovation, practical tools, and enterprise-grade infrastructure for organizations looking to implement generative AI. The integration of cutting-edge research with accessible development environments creates a platform that can support everything from experimental prototypes to production-scale applications.

For organizations beginning their generative AI journey, GCP provides a gradual on-ramp through tools like Vertex AI Studio, while those ready for more sophisticated implementations can leverage the full power of Google’s AI infrastructure and custom model development capabilities.

As generative AI continues to evolve, Google’s deep research heritage positions GCP to remain at the forefront of innovation, providing organizations with access to the latest advances through their cloud platform. The combination of foundation models, specialized solutions, and integration with the broader GCP ecosystem creates a comprehensive environment for building the next generation of AI-powered applications.

Whether you’re just beginning to explore generative AI or looking to scale existing implementations, Google Cloud Platform provides the tools, infrastructure, and expertise to help you succeed in this transformative technology landscape.

This article is part of our ongoing series exploring cloud technologies and their real-world applications. For more insights on implementing cloud solutions, visit TowardsCloud.com.