Generative AI is transforming the world as we know it, from creating stunningly realistic images to composing music and even writing code. But who are the minds behind this revolution? This isn’t a story of a single “Eureka!” moment, but rather a decades-long journey of incremental breakthroughs, driven by passionate researchers, engineers, and mathematicians. This post delves into the history, key figures, and foundational concepts that have paved the way for the generative AI boom we’re experiencing today, and introduces some of its unsung heroes along the way.

Think of it this way: if Generative AI were a magnificent cathedral, this post explores the architects, stonemasons, and artists who laid the foundation and built the soaring arches, even if they never saw the finished masterpiece.

Why Should You Care?

Understanding the history of Generative AI isn’t just for academics. It’s crucial for several reasons:

- Appreciating the Complexity: Recognizing the decades of work behind these “overnight successes” gives us a deeper appreciation for the technology.

- Understanding the Limitations: Knowing the underlying principles helps us understand why generative AI sometimes makes mistakes or produces unexpected results.

- Predicting the Future: By seeing the trajectory of progress, we can better anticipate where the field is heading.

- Demystifying the “Magic”: It’s easy to see AI as magic, but understanding the underlying principles makes it less intimidating and more accessible.

- Inspiring Innovation: Learning about the pioneers can spark new ideas and inspire the next generation of AI researchers.

A Journey Through Time: The Foundations

The roots of Generative AI stretch back further than you might think. Let’s start with the foundational concepts:

1. Early Statistics and Probability (17th to Early 20th Centuries):

- Concept: The very idea of generating something “new” relies on understanding probability and distributions. Think of rolling dice – you’re generating a random number, but within a defined set of possibilities.

- Key Figures:

- Blaise Pascal & Pierre de Fermat: Laid the foundations of probability theory. [Link to: https://mathshistory.st-andrews.ac.uk/Biographies/Pascal/] [Link to: https://mathshistory.st-andrews.ac.uk/Biographies/Fermat/]

- Thomas Bayes: Developed Bayes’ theorem, crucial for understanding conditional probability (how the probability of an event changes based on new evidence). [Link to: https://plato.stanford.edu/entries/bayes-theorem/]

- Andrey Markov: Introduced Markov chains, a way to model sequences of events where the next event depends only on the current state (like predicting the weather based only on today’s weather). [Link to: https://www.britannica.com/biography/Andrey-Andreyevich-Markov]

- Real-Life Example: Imagine predicting the next word in a sentence. If you see “The cat sat on the…”, you’re more likely to predict “mat,” “couch,” or “chair” than “airplane” or “banana.” This is basic probability at work.
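The next-word idea above can be sketched as a tiny Markov chain over words. This is only an illustration under stated assumptions: the toy corpus and the helper names (`build_bigram_model`, `next_word_probabilities`, `generate`) are made up for this post and are not taken from any library.

```python
import random
from collections import Counter, defaultdict

def build_bigram_model(text):
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers

def next_word_probabilities(model, word):
    """Turn raw follower counts into a probability distribution."""
    counts = model.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def generate(model, start, length, rng):
    """Sample a sequence where each word depends only on the previous word."""
    out = [start]
    for _ in range(length - 1):
        counts = model.get(out[-1])
        if not counts:
            break  # no known follower for this word
        choices, weights = zip(*counts.items())
        out.append(rng.choices(choices, weights=weights)[0])
    return out

# Hypothetical toy corpus, chosen only for illustration.
corpus = "the cat sat on the mat the cat sat on the couch the dog sat on the mat"
model = build_bigram_model(corpus)
probs = next_word_probabilities(model, "the")
sample = generate(model, "the", 6, random.Random(0))
print(probs)
print(" ".join(sample))
```

In this corpus, "airplane" never follows "the", so it gets no probability at all, while "cat" and "mat" are the most likely continuations. Modern language models replace the count table with a neural network, but the sampling step is the same in spirit.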

2. Early Computing and Artificial Intelligence (Mid-20th Century):

- Concept: The development of computers provided the necessary hardware to implement complex algorithms. Early AI researchers explored the idea of machines that could “think” and “learn.”

- Key Figures:

- Alan Turing: Proposed the Turing Test, a benchmark for machine intelligence, and was a key figure in the development of early computers. [Link to: https://www.turing.org.uk/]

- John von Neumann: Developed the von Neumann architecture, the foundation of most modern computers. [Link to: https://www.ias.edu/von-neumann]

- Arthur Samuel: Created one of the first self-learning programs, a checkers-playing program. [Link to: https://www.computerhistory.org/fellowawards/hall/arthur-l-samuel/]

- Real-Life Example: Early game-playing programs, such as Samuel’s checkers player, were not “generative” in the modern sense, but they demonstrated that computers could improve their decisions through experience.

3. Neural Networks: The First Wave (1940s-1960s):

- Concept: Inspired by the structure of the human brain, neural networks are interconnected nodes (neurons) that process information. Early models like the Perceptron showed promise but had limitations.

- Key Figures:

- Warren McCulloch & Walter Pitts: Proposed the first mathematical model of a neuron. [Link to: https://www.cs.cmu.edu/~./epxing/Class/10715/reading/McCulloch.and.Pitts.pdf]

- Frank Rosenblatt: Developed the Perceptron, a simple neural network capable of learning to classify patterns. [Link to: https://medium.com/@robdelacruz/frank-rosenblatts-perceptron-19fcce9d627f]

- Real-Life Example: Imagine a simple network that learns to distinguish between images of cats and dogs. It might learn that pointy ears are a feature of cats, while floppy ears are a feature of dogs.

- Limitation: A single-layer Perceptron can only learn linearly separable patterns. This means it can’t solve problems like the XOR problem (where the output is 1 only if the two inputs are different). This limitation, highlighted by Marvin Minsky and Seymour Papert in 1969, contributed to the first “AI winter.”
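To make the limitation concrete, here is a minimal sketch of a Rosenblatt-style learning rule applied to AND (linearly separable) and XOR (not linearly separable). The function names, the learning rate, and the epoch count are assumptions chosen for illustration.

```python
def predict(w, b, x):
    """Threshold unit: output 1 if the weighted sum is positive, else 0."""
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0

def train_perceptron(samples, epochs=25, lr=1.0):
    """Nudge the weights toward the target whenever a prediction is wrong."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            err = target - predict(w, b, x)
            w[0] += lr * err * x[0]
            w[1] += lr * err * x[1]
            b += lr * err
    return w, b

def accuracy(w, b, samples):
    """Fraction of samples the trained unit classifies correctly."""
    return sum(predict(w, b, x) == t for x, t in samples) / len(samples)

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_acc = accuracy(*train_perceptron(AND), AND)
xor_acc = accuracy(*train_perceptron(XOR), XOR)
print("AND accuracy:", and_acc)
print("XOR accuracy:", xor_acc)
```

The single unit settles on a separating line for AND, but no single straight line separates the XOR cases, so at least one of the four points is always misclassified no matter how long it trains.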

4. The AI Winter(s) (1970s-1990s):

- Concept: Periods of reduced funding and interest in AI research due to unmet expectations and limitations of existing techniques. Early hype didn’t match reality.

- Reasoning: Researchers realized that the early approaches were too limited to solve complex problems. Symbolic AI, which relied on explicit rules, struggled with the messiness of real-world data. Neural networks were hampered by the limitations of the Perceptron and the lack of computing power.

- Real-Life Example: Imagine trying to teach a computer to understand natural language using only a set of grammar rules. It would quickly become overwhelmed by the nuances and exceptions of human language.

5. Backpropagation and the Second Wave of Neural Networks (1980s-1990s):

- Concept: Backpropagation is an algorithm that allows neural networks to learn from their errors. It’s like adjusting the knobs on a radio to get a clearer signal. The “knobs” in a neural network are the weights of the connections between neurons.

- Key Figures:

- Geoffrey Hinton: A central figure in the development and popularization of backpropagation. [Link to: https://www.cs.toronto.edu/~hinton/]

- Yann LeCun: Pioneered the use of convolutional neural networks (CNNs) for image recognition. [Link to: http://yann.lecun.com/]

- Yoshua Bengio: Made significant contributions to recurrent neural networks (RNNs) and language modeling. [Link to: https://yoshuabengio.org/]

- David E. Rumelhart, Geoffrey E. Hinton, and Ronald J. Williams: The authors of the groundbreaking 1986 paper “Learning representations by back-propagating errors,” which popularized backpropagation. [Link to: https://www.nature.com/articles/323533a0]

- Real-Life Example: Imagine a neural network trying to identify handwritten digits. If it misclassifies a “7” as a “1,” backpropagation adjusts the network’s weights to make it more likely to correctly classify “7”s in the future.
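The “adjusting the knobs” idea can be sketched on a very small network. The example below assumes a 2-input, 2-hidden-unit, 1-output sigmoid network with squared-error loss and hand-picked starting weights. All of these choices are illustrative, not a description of any historical implementation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Return hidden activations and the output of a 2-2-1 sigmoid network."""
    W1, b1, W2, b2 = params
    h = [sigmoid(W1[j][0] * x[0] + W1[j][1] * x[1] + b1[j]) for j in range(2)]
    y = sigmoid(W2[0] * h[0] + W2[1] * h[1] + b2)
    return h, y

def loss(params, x, target):
    """Squared error between the network output and the target."""
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2

def backprop(params, x, target):
    """Gradients of the loss for every weight, obtained with the chain rule."""
    W1, b1, W2, b2 = params
    h, y = forward(params, x)
    delta_out = (y - target) * y * (1 - y)  # error signal at the output unit
    gW2 = [delta_out * h[j] for j in range(2)]
    # Propagate the error signal backwards through the output weights.
    delta_h = [delta_out * W2[j] * h[j] * (1 - h[j]) for j in range(2)]
    gW1 = [[delta_h[j] * x[i] for i in range(2)] for j in range(2)]
    return gW1, delta_h, gW2, delta_out

def sgd_step(params, grads, lr):
    """Move every weight a small step against its gradient."""
    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    W1 = [[W1[j][i] - lr * gW1[j][i] for i in range(2)] for j in range(2)]
    b1 = [b1[j] - lr * gb1[j] for j in range(2)]
    W2 = [W2[j] - lr * gW2[j] for j in range(2)]
    return W1, b1, W2, b2 - lr * gb2

# Hand-picked starting weights and a single training example (assumptions).
params = ([[0.1, -0.2], [0.4, 0.3]], [0.0, 0.0], [0.5, -0.5], 0.0)
x, target = [1.0, 0.0], 1.0
before = loss(params, x, target)
for _ in range(200):
    params = sgd_step(params, backprop(params, x, target), lr=0.5)
after = loss(params, x, target)
print("loss before:", before, "loss after:", after)
```

Each step measures how much every weight contributed to the error and turns that “knob” slightly in the direction that reduces it. Real training repeats this over many examples rather than one.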

6. The Rise of the Internet and Big Data (1990s-2000s):

- Concept: The explosion of the internet provided vast amounts of data, which is essential for training powerful AI models. “Big Data” became a buzzword.

- Impact: Neural networks, which had previously been limited by the lack of data, could now be trained on massive datasets, leading to significant performance improvements.

- Real-Life Example: Companies like Google used the vast amount of text on the web to train language models that could translate languages, answer questions, and generate text.

7. Deep Learning Revolution (2010s-Present):

- Concept: “Deep” neural networks, with many layers, became feasible due to increased computing power (especially GPUs) and the availability of large datasets. These networks could learn complex, hierarchical representations of data.

- Key Breakthroughs:

- ImageNet Competition (2012): AlexNet, a deep convolutional neural network, achieved a dramatic improvement in image classification accuracy, marking a turning point for deep learning. [Link to: https://papers.nips.cc/paper/2012/file/c399862d3b9d6b76c8436e924a68c45b-Paper.pdf]

- Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM): First proposed in 1997, LSTMs came into wide use during this period. These networks excelled at processing sequential data, like text and speech. [Link to: https://www.bioinf.jku.at/publications/older/2604.pdf]

- Generative Adversarial Networks (GANs) (2014): A breakthrough in generative modeling, allowing the creation of highly realistic images, videos, and audio. [Link to: https://arxiv.org/abs/1406.2661]

- Transformers (2017): A new architecture that revolutionized natural language processing, leading to models like GPT-3 and BERT. [Link to: https://arxiv.org/abs/1706.03762]

- Real-Life Example: Deep learning powers many applications we use daily, from image recognition in our phones to speech assistants like Siri and Alexa.

The Generative AI Pioneers: A Closer Look

Now, let’s dive into the specific architectures and the inventors who brought them to life:

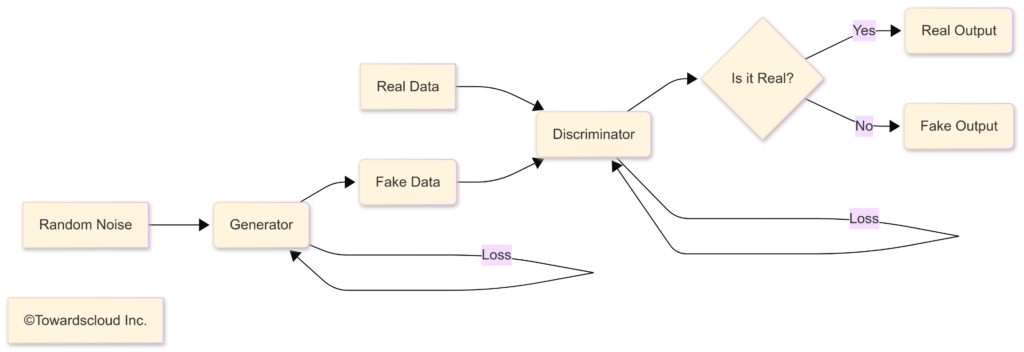

A. Generative Adversarial Networks (GANs):

- Inventor: Ian Goodfellow (and his colleagues at the University of Montreal) [Link to: https://www.iangoodfellow.com/]

- Year: 2014

- Concept: GANs consist of two neural networks: a generator that creates new data instances, and a discriminator that tries to distinguish between real data and the generated data. They are trained in an adversarial process, like a game of cat and mouse.

- Analogy: Imagine a forger (generator) trying to create fake paintings, and an art expert (discriminator) trying to spot the fakes. Over time, the forger gets better at creating realistic fakes, and the expert gets better at detecting them.
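The forger-versus-expert game can be sketched through the two loss functions involved. This is a minimal illustration, assuming the discriminator outputs a probability that its input is real and using the common non-saturating form of the generator loss. The function names and the example scores are hypothetical, and no actual networks are trained here.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Cross-entropy loss: low when real data scores near 1 and fakes near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator is fooled."""
    return -math.log(d_fake)

# Hypothetical discriminator scores at three stages of training:
# (score on a real sample, score on a generated sample).
stages = [(0.9, 0.1), (0.7, 0.4), (0.5, 0.5)]
for d_real, d_fake in stages:
    print(d_real, d_fake,
          round(discriminator_loss(d_real, d_fake), 3),
          round(generator_loss(d_fake), 3))
```

As the fakes improve, the generator's loss falls and the discriminator's loss rises. When the discriminator can do no better than guessing (0.5 for everything), its loss equals log 4, which is the equilibrium value discussed in the original GAN paper.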

Key Contributions of Ian Goodfellow and GANs

| Feature | Description | Real-Life Example |
|---|---|---|
| Invention | Generative Adversarial Networks (GANs) | N/A |
| Concept | Two networks (Generator and Discriminator) competing, leading to increasingly realistic data generation. | Forger (Generator) vs. Art Expert (Discriminator) |
| Generator | Creates new data instances from random noise. | Creating a realistic image of a face that doesn’t exist. |
| Discriminator | Evaluates data instances, distinguishing between real and generated data. | Determining if an image is a real photograph or a GAN-generated image. |
| Adversarial Training | The Generator and Discriminator are trained simultaneously, with each trying to outsmart the other. | The forger constantly improves its fakes, while the expert becomes better at spotting them. |
| Applications | Image generation, video generation, text-to-image synthesis, drug discovery, anomaly detection, style transfer, super-resolution, data augmentation, and many more. | Generating realistic product images for e-commerce, creating deepfakes (with ethical concerns), enhancing old photos, designing new molecules for medicines. |
| Impact | Revolutionized generative modeling; opened new frontiers in AI creativity and data synthesis. | Creating art, assisting in design processes, generating synthetic data for training other AI models. |
| Limitation | Training can be unstable (mode collapse, non-convergence, vanishing gradients); difficult to control the generated output; potential for misuse (e.g., deepfakes). | Generating thousands of similar images with little variation; difficulty generating images with specific, controlled features. |

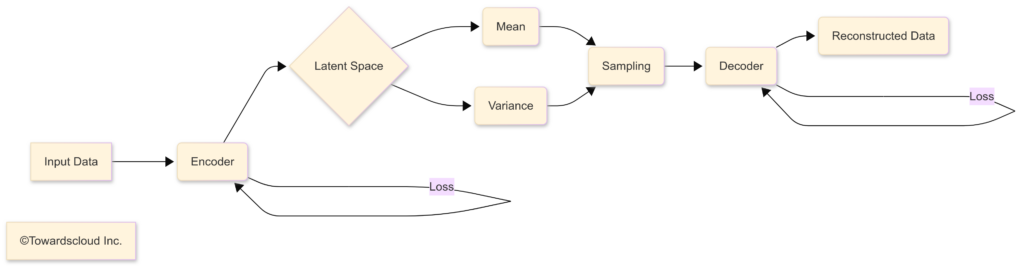

B. Variational Autoencoders (VAEs):

- Inventors: Diederik P. Kingma and Max Welling [Link to: https://arxiv.org/abs/1312.6114]

- Year: 2013

- Concept: VAEs are a type of autoencoder, a neural network that learns to compress and then reconstruct data. VAEs add a probabilistic twist, forcing the learned representation to follow a specific distribution (usually a Gaussian distribution). This allows for generating new data by sampling from this distribution.

- Analogy: Imagine a machine that learns to draw faces. A regular autoencoder might learn a specific set of features (e.g., “big eyes,” “small nose”). A VAE learns a range of possibilities for each feature (e.g., “eyes can be this big or this small,” “noses can be this long or this short”). This allows it to generate more diverse and novel faces.
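The “probabilistic twist” can be sketched with the two small formulas at the core of a VAE: sampling a latent vector from the encoder's predicted Gaussian, and measuring how far that Gaussian is from a standard normal. The sketch assumes a diagonal Gaussian encoder output given as a mean and a log-variance per latent dimension. The function names and example numbers are illustrative.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), per latent dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv)
                      for m, lv in zip(mu, log_var))

# Hypothetical encoder output for one input (two latent dimensions).
mu, log_var = [1.0, 0.0], [0.0, 0.0]
rng = random.Random(0)
z = reparameterize(mu, log_var, rng)
print("sampled latent:", z)
print("KL penalty:", kl_to_standard_normal(mu, log_var))
```

During training, the KL term pulls every encoding toward the same standard Gaussian, which is what later makes it possible to generate new data by sampling from that Gaussian and running only the decoder.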

Key Contributions of Kingma and Welling, and VAEs

| Feature | Description | Real-Life Example |
|---|---|---|
| Invention | Variational Autoencoders (VAEs) | N/A |
| Concept | Autoencoders with a probabilistic latent space, allowing for controlled generation of new data. | Learning a “space” of facial features and generating new faces by sampling from that space. |
| Encoder | Compresses the input data into a lower-dimensional latent space representation. | Representing an image of a face as a set of numbers representing key features. |
| Latent Space | A compressed representation of the data, often following a Gaussian distribution. | A multi-dimensional space where each point represents a possible face. |
| Decoder | Reconstructs the original data from the latent space representation. | Generating an image of a face from the set of numbers representing its features. |
| Probabilistic Nature | Introduces randomness into the encoding and decoding process, enabling the generation of new data instances. | Allows for generating variations of a face, rather than just reconstructing the original. |
| Applications | Image generation, anomaly detection, data denoising, dimensionality reduction, drug discovery, music generation. | Generating new molecules with desired properties, creating variations of musical melodies. |
| Impact | Provided an alternative approach to generative modeling with better training stability and control compared to early GANs. | Creating variations in generated data; interpolating between different data points in the latent space. |
| Limitation | Generated images can be blurry compared to GANs; controlling specific features can still be challenging. | Difficulty generating highly detailed and sharp images; less intuitive control over specific attributes. |

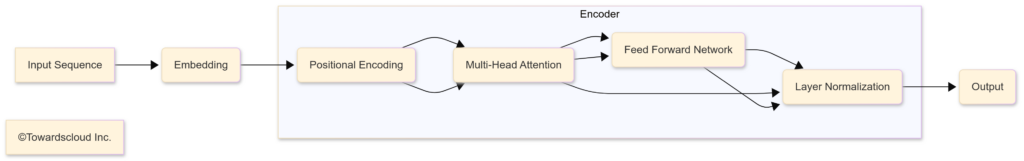

C. Transformers and Attention Mechanisms:

- Inventors: Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin (Google Brain team) [Link to: https://arxiv.org/abs/1706.03762]

- Year: 2017

- Concept: The Transformer architecture relies on the attention mechanism, which allows the model to focus on different parts of the input sequence when processing it. This is a departure from previous recurrent models (like LSTMs) that processed data sequentially. Transformers can process the entire input sequence in parallel, making them much faster to train.

- Analogy: Imagine reading a long sentence. You don’t process each word with equal importance. You pay more attention to the key words that carry the most meaning. The attention mechanism does the same for a neural network. For example, when translating “The cat, which was fluffy, sat on the mat”, a Transformer can link “cat” directly to “sat” despite the clause between them.
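The attention idea can be sketched as scaled dot-product attention, the operation defined in the Transformer paper as softmax(QKᵀ / √d_k)V. The version below is a simplified illustration: it uses hand-picked two-dimensional token vectors (an assumption) directly as queries, keys, and values, whereas a real Transformer first applies learned projections and uses many attention heads.

```python
import math

def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy embeddings; "cat" and "sat" are deliberately similar.
tokens = ["cat", "fluffy", "sat"]
embeddings = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
outputs, weights = attention(embeddings, embeddings, embeddings)
for tok, row in zip(tokens, weights):
    print(tok, [round(w, 3) for w in row])
```

Every row of weights is computed independently of the others, which is why the whole sequence can be processed in parallel. In this toy setup, the row for “sat” puts more weight on “cat” than on “fluffy”.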

Key Aspects of Transformers and Attention

| Feature | Description | Real-Life Example |
|---|---|---|
| Invention | Transformer Architecture | N/A |
| Concept | Uses attention mechanisms to process input sequences in parallel, focusing on relevant parts. | Reading a sentence and focusing on the most important words. |
| Attention Mechanism | Allows the model to weigh the importance of different parts of the input sequence when processing it. | Paying more attention to the subject and verb of a sentence than to prepositions. |
| Self-Attention | A specific type of attention where the model attends to different parts of the same input sequence. | Understanding the relationship between words in a sentence (e.g., “cat” and “sat” in “The cat sat on the mat”). |
| Parallel Processing | Transformers can process the entire input sequence at once, unlike recurrent models that process sequentially. | Reading the entire sentence at once, rather than word by word. |
| Applications | Machine translation, text summarization, question answering, text generation, image captioning, code generation, and many more. | Google Translate, chatbots, code completion tools, large language models like GPT-3, LaMDA, and BERT. |
| Impact | Revolutionized NLP; significantly improved performance on many tasks; enabled the creation of extremely large and powerful language models. | Improved accuracy and fluency of machine translation; enabled more natural and coherent conversations with chatbots; powering sophisticated AI writing tools. |
| Limitation | Can be computationally expensive to train; require large amounts of data; can struggle with very long sequences; interpretability can be a challenge. | Training models like GPT-3 requires massive computing resources; may generate text that is factually incorrect or biased. |

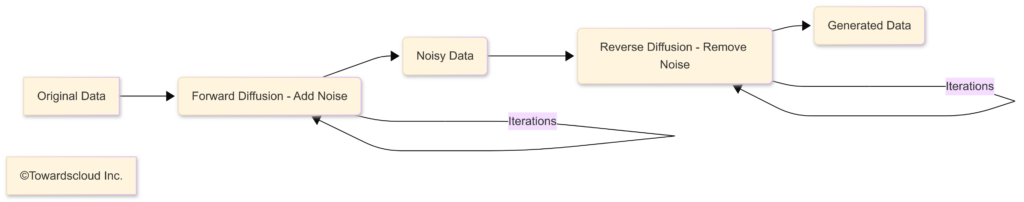

D. Diffusion Models:

- Inventors: Key contributors include Jascha Sohl-Dickstein, Eric A. Weiss, Niru Maheswaranathan, and Surya Ganguli (initial theoretical work), and later Jonathan Ho, Diederik P. Kingma, Tim Salimans, and others who significantly advanced the practical application and efficiency of diffusion models. [Link to Initial Paper: https://arxiv.org/abs/1503.03585] [Link to DDPM: https://arxiv.org/abs/2006.11239]

- Year: Theoretical foundations laid in 2015; major practical advancements around 2020.

- Concept: Diffusion models work by gradually adding noise to data (the “forward diffusion process”) until it becomes pure noise, and then learning to reverse this process (the “reverse diffusion process”) to generate new data from noise.

- Analogy: Imagine taking a clear photograph and slowly blurring it, pixel by pixel, until it’s just a random mess of colors. A diffusion model learns how to unblur that mess, step by step, to reconstruct the original image, or even create entirely new images.

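The forward (noising) half of the process can be sketched in a few lines. The example assumes the linear noise schedule reported in the DDPM paper (1000 steps, with the per-step noise level rising from 1e-4 to 0.02) and treats a “picture” as a short list of numbers. The function names are illustrative. The reverse half requires a trained neural network and is not shown.

```python
import math
import random

def linear_beta_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise levels that grow linearly over the schedule."""
    return [beta_start + (beta_end - beta_start) * t / (steps - 1)
            for t in range(steps)]

def cumulative_alphas(betas):
    """alpha_bar_t: fraction of the original signal variance left at step t."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def noisy_sample(x0, t, alpha_bars, rng):
    """Jump straight to step t: sqrt(a) * x0 + sqrt(1 - a) * Gaussian noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * v + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0)
            for v in x0]

betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5]  # hypothetical three-"pixel" image
for t in (0, 250, 999):
    print(t, round(alpha_bars[t], 5), noisy_sample(x0, t, alpha_bars, rng))
```

At the first step almost all of the original signal survives, and by the last step essentially none does, so the sample is indistinguishable from pure noise. A diffusion model is trained to predict the noise that was added at each step, which is what lets it walk backwards from noise to data.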

Key Aspects of Diffusion Models

| Feature | Description | Real-Life Example |
|---|---|---|
| Invention | Diffusion Models | N/A |
| Concept | Generate data by reversing a process of gradually adding noise. | Like unblurring an image step-by-step. |
| Forward Diffusion | Adds noise to the data until it becomes pure noise. | Gradually blurring a photograph until it’s unrecognizable. |
| Reverse Diffusion | Learns to remove the noise, step-by-step, to generate data. | Reconstructing the original photo, or creating a new one, from the noise. |
| Applications | Image generation, text-to-image synthesis, image editing, audio generation, video generation, molecular design. | Creating high-quality images from text descriptions (DALL-E 2, Stable Diffusion, Imagen). |
| Impact | Achieved state-of-the-art results in image generation, often surpassing GANs in quality and fidelity; more stable training than GANs. | Creating extremely realistic and detailed images; providing fine-grained control over generated content. |
| Limitation | Can be slow to generate data (requires many sampling steps); computationally expensive to train. | Generating a single high-resolution image can take significant time; requires powerful hardware. |

Putting It All Together: The Generative AI Landscape

| Model | Inventors | Year | Strengths | Weaknesses | Main Applications |
|---|---|---|---|---|---|
| GANs | Ian Goodfellow et al. | 2014 | Fast generation; high-quality images (in some cases). | Training instability (mode collapse, vanishing gradients); difficult to control output. | Image generation, video generation, style transfer, data augmentation. |
| VAEs | Diederik P. Kingma, Max Welling | 2013 | Stable training; latent space representation allows for controlled generation and interpolation. | Generated images can be blurry; less intuitive control over specific features than GANs. | Image generation, anomaly detection, data denoising, dimensionality reduction. |
| Transformers (for Gen.) | Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, Illia Polosukhin | 2017 | Excellent for text and sequential data; parallel processing; strong performance on many NLP tasks. | Computationally expensive to train; require large datasets; can struggle with very long sequences. | Text generation, machine translation, code generation, music generation. |
| Diffusion Models | Jascha Sohl-Dickstein et al. (theoretical foundations), Jonathan Ho, Diederik P. Kingma, Tim Salimans et al. (practical advancements) | 2015 (theory), 2020 (DDPM) | State-of-the-art image quality; stable training; more control over generation process. | Slow generation (many sampling steps); computationally expensive to train. | Image generation, text-to-image synthesis, image editing, audio generation, video generation. |

The Future of Generative AI

The field of Generative AI is still rapidly evolving. Here are some key trends and future directions:

- Multimodal Models: Models that can generate and understand multiple modalities of data (text, images, audio, video) simultaneously. This is like a human being able to understand and express themselves through words, pictures, and sounds. For example, DALL-E 2 takes a text prompt and generates a corresponding image.

- Controllability and Customization: Giving users more fine-grained control over the generated output. This is like being able to specify not just “a cat,” but “a fluffy orange tabby cat sitting on a red cushion in a sunny window.”

- Efficiency and Scalability: Making generative models smaller, faster, and less resource-intensive to train and deploy. This is crucial for making the technology accessible to a wider range of users and applications.

- Ethical Considerations: Addressing the potential for misuse of generative AI, such as deepfakes and misinformation. This requires developing methods for detecting generated content and promoting responsible use of the technology.

- Explainability and Interpretability: Understanding why a generative model produces a particular output. This is important for building trust and ensuring fairness.

- Real-World Applications: Continued expansion into diverse fields, including:

- Drug Discovery: Designing new molecules with specific properties.

- Materials Science: Discovering new materials with desired characteristics.

- Art and Entertainment: Creating new forms of art, music, and interactive experiences.

- Education: Personalized learning tools and content generation.

- Scientific Research: Generating hypotheses and simulations.

Conclusion: Standing on the Shoulders of Giants

The generative AI revolution is built upon the contributions of many brilliant minds, spanning decades of research. From the early pioneers of probability and computing to the inventors of GANs, VAEs, Transformers, and Diffusion Models, these individuals have laid the foundation for a technology that is transforming our world. As we continue to push the boundaries of what’s possible, it’s essential to remember and appreciate the “shoulders of giants” upon which we stand. Their work inspires us to continue exploring, innovating, and shaping the future of AI in a responsible and beneficial way. The story of Generative AI is far from over; it’s just beginning. And by understanding its history, we can better navigate its future.